Abstract

Artificial Intelligence (AI) systems are increasingly embedded in critical decision-making processes across industries such as healthcare, finance, manufacturing, and transportation. Designing effective AI models requires balancing competing priorities, as improvements in one aspect of a system often lead to compromises in another. Among the most significant trade-offs in AI model development are accuracy versus interpretability and speed versus complexity. These trade-offs influence not only model performance but also system reliability, usability, and regulatory compliance. This paper examines these key design tensions and illustrates how organizations make strategic decisions when selecting AI models for real-world applications.

Introduction

AI model design is not simply about achieving the highest possible prediction accuracy. In practical environments, models must satisfy multiple constraints including explainability, computational efficiency, scalability, and operational reliability. As AI systems become more complex, developers frequently encounter situations where optimizing one property leads to deterioration in another.

For example, highly accurate deep learning models often operate as opaque systems with limited explainability, while simpler models that are easy to interpret may not capture complex relationships in data. Similarly, models with sophisticated architectures may produce superior predictions but require substantial computational resources, which slows inference in real-time applications.

Understanding these trade-offs is critical for practitioners who must design AI systems aligned with business goals, regulatory standards, and operational constraints.

The Nature of Trade-offs in AI Systems

Trade-offs arise because AI systems operate under finite computational resources, imperfect data, and varying operational requirements. Every model design involves decisions regarding:

- Model architecture

- Training methodology

- Feature engineering

- Deployment environment

- Latency requirements

These decisions shape the balance between performance, interpretability, scalability, and computational efficiency. A model that performs exceptionally well in controlled experimental settings may fail in production if it cannot meet operational constraints such as real-time processing or explainability requirements.

Two of the most prominent trade-offs in AI model design are accuracy versus interpretability and speed versus complexity.

Accuracy vs Interpretability

Understanding the Trade-off

Accuracy refers to the ability of a model to make correct predictions, while interpretability refers to the degree to which humans can understand how a model arrives at its decisions.

In general, as models become more complex, their capacity to fit intricate patterns tends to increase, but their internal reasoning becomes more difficult to explain. This creates a challenge in domains where transparency and accountability are essential.

Simple models such as linear regression or shallow decision trees are easily interpretable because their structure directly reveals how input variables influence outcomes. In contrast, models such as deep neural networks contain millions or even billions of parameters, making their internal operations difficult to interpret.

Interpretable Models

Interpretable models include:

- Linear regression

- Logistic regression

- Decision trees

- Rule-based systems

These models allow practitioners to directly observe the relationship between features and predictions. For example, in a logistic regression model predicting loan approval, the sign and size of each coefficient indicate how a specific factor such as income or credit score shifts the log-odds, and therefore the probability, of approval.
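The loan-approval example can be made concrete with a minimal sketch. The intercept, coefficient values, and feature names below are hypothetical and chosen only for illustration; they are not fitted to any real data.

```python
import math

# Hypothetical coefficients for illustration only; not fitted to real data.
INTERCEPT = -4.0
COEFFICIENTS = {
    "income_10k": 0.30,        # per additional 10,000 units of annual income
    "credit_score_100": 0.50,  # per additional 100 points of credit score
}

def approval_probability(features):
    """Map the linear log-odds to a probability with the logistic function."""
    log_odds = INTERCEPT + sum(
        COEFFICIENTS[name] * value for name, value in features.items()
    )
    return 1.0 / (1.0 + math.exp(-log_odds))

def odds_ratios():
    """exp(coefficient) is the multiplicative change in odds per unit increase."""
    return {name: math.exp(beta) for name, beta in COEFFICIENTS.items()}

applicant = {"income_10k": 5.0, "credit_score_100": 7.0}
print(approval_probability(applicant))  # log-odds of 1.0, so about 0.731
print(odds_ratios())
```

Each coefficient can be read directly: a positive value raises the odds of approval, and exponentiating it gives the odds ratio for a one-unit increase in that feature.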

However, interpretable models often struggle with complex patterns in large datasets.

High-Accuracy but Low-Interpretability Models

More advanced models include:

- Deep neural networks

- Gradient boosting models

- Random forests

- Transformer architectures

These models can capture complex nonlinear relationships and interactions between variables, leading to higher predictive accuracy. However, their complexity makes it difficult to explain how specific predictions are generated.

Real-World Illustration: Healthcare Diagnostics

In medical imaging, deep learning models can detect signs of diseases such as cancer with high accuracy by analyzing complex visual patterns in X-rays or MRI scans. However, clinicians often require explanations for these predictions.

A model that simply outputs a probability score without explanation may not be trusted by healthcare professionals. To address this issue, researchers use explainability techniques that highlight regions of an image that influenced the diagnosis.

This example illustrates the challenge: achieving high accuracy while maintaining sufficient interpretability for decision-making.
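One simple way to highlight influential image regions is occlusion sensitivity: mask one patch at a time and record how much the model's score drops. The sketch below assumes a hypothetical `toy_score` function standing in for a real classifier and a tiny 4x4 grid standing in for an image; it illustrates the idea rather than any specific clinical tool.

```python
def occlusion_map(score_fn, image, patch=2, fill=0.0):
    """Return a heat map of score drops when each patch is replaced by `fill`."""
    height, width = len(image), len(image[0])
    base = score_fn(image)
    heat = [[0.0] * width for _ in range(height)]
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            rows = range(top, min(top + patch, height))
            cols = range(left, min(left + patch, width))
            occluded = [row[:] for row in image]  # copy so the input is untouched
            for r in rows:
                for c in cols:
                    occluded[r][c] = fill
            drop = base - score_fn(occluded)
            for r in rows:
                for c in cols:
                    heat[r][c] = drop
    return heat

# Hypothetical stand-in for a classifier: it responds only to the
# bottom-right 2x2 block of the image.
def toy_score(image):
    return sum(image[r][c] for r in (2, 3) for c in (2, 3)) / 4.0

image = [[1.0] * 4 for _ in range(4)]
heat = occlusion_map(toy_score, image)
for row in heat:
    print(row)
```

Patches with a large score drop are the ones the model relied on, which is the kind of evidence a clinician can compare against the visible anatomy.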

Real-World Illustration: Financial Credit Scoring

Financial institutions often prefer interpretable models for credit scoring because regulations in many jurisdictions require lenders to explain why a loan application was rejected.

While a deep learning model may produce slightly better predictive accuracy, a logistic regression model may be preferred because it clearly shows how factors such as income, employment history, and debt influence approval decisions.

In such cases, interpretability becomes more valuable than marginal improvements in accuracy.

Bridging the Gap

To address this trade-off, researchers have developed techniques in Explainable AI (XAI) that attempt to explain complex models. Methods such as SHAP values and LIME help identify which features contributed most to a specific prediction.

These approaches allow organizations to benefit from complex models while improving transparency.
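SHAP builds on Shapley values from cooperative game theory. The sketch below computes exact Shapley attributions for a tiny model by enumerating every feature coalition. It is not the SHAP library, which relies on approximations to scale; the `toy_model` function, the feature names, and the all-zero baseline are assumptions made for illustration.

```python
from itertools import combinations
from math import factorial

def shapley_values(model, instance, baseline):
    """Exact Shapley attributions for one prediction.

    Features outside a coalition take their baseline value. The cost grows
    as 2^n in the number of features, so this only suits very small n.
    """
    names = list(instance)
    n = len(names)

    def value(coalition):
        mixed = {f: (instance[f] if f in coalition else baseline[f]) for f in names}
        return model(mixed)

    attributions = {}
    for feature in names:
        others = [f for f in names if f != feature]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = set(subset)
                total += weight * (value(coalition | {feature}) - value(coalition))
        attributions[feature] = total
    return attributions

# Hypothetical scoring function with an interaction term, for illustration only.
def toy_model(x):
    return 2.0 * x["income"] + 1.0 * x["debt"] * x["age"]

instance = {"income": 3.0, "debt": 2.0, "age": 4.0}
baseline = {"income": 0.0, "debt": 0.0, "age": 0.0}
phi = shapley_values(toy_model, instance, baseline)
print(phi)
```

The attributions sum to the difference between the prediction for the instance and the prediction for the baseline, and the interaction term is split evenly between the two features that produce it.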

Speed vs Complexity

Understanding the Trade-off

Another critical design trade-off involves computational speed versus model complexity.

Complex models often require significant computational resources, longer training times, and slower inference speeds. In many real-world applications, predictions must be generated in milliseconds, making computational efficiency a critical factor.

Simple Models and High-Speed Inference

Simple models generally provide faster predictions because they require fewer computations. Examples include:

- Linear models

- Shallow decision trees

- Naive Bayes classifiers

These models are often used in applications where speed is essential.

Complex Models and Computational Overhead

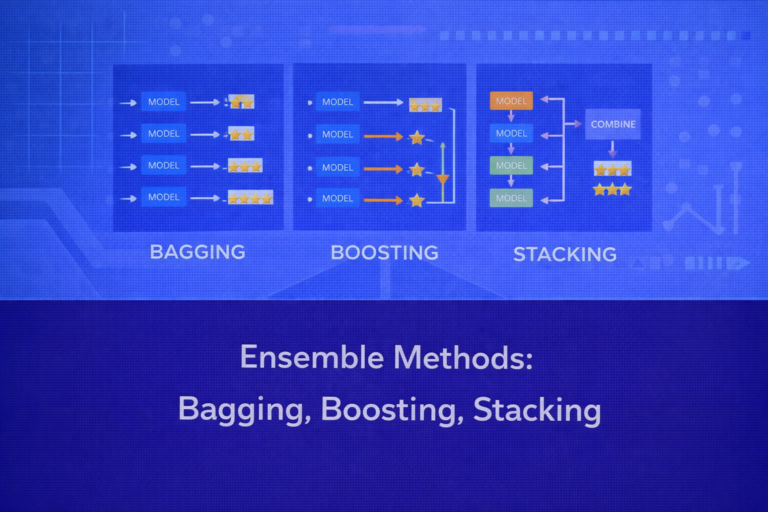

Advanced models such as deep neural networks and ensemble methods involve multiple layers of computation, large numbers of parameters, and extensive feature interactions. While these models often produce better predictions, they require more processing power and memory.

For instance, large language models and computer vision systems may require specialized hardware such as GPUs or TPUs to perform inference efficiently.
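A rough sense of this overhead comes from counting parameters and multiply-accumulate operations. The sketch below does so for fully connected networks only; the layer widths are hypothetical, and real architectures such as convolutional or transformer models have different cost formulas.

```python
def dense_cost(layer_sizes):
    """Return (parameters, multiply_accumulates) for a fully connected network.

    layer_sizes lists the width of each layer, input first and output last.
    Each layer holds fan_in * fan_out weights plus fan_out biases, and one
    forward pass performs fan_in * fan_out multiply-accumulates per layer.
    """
    params = 0
    macs = 0
    for fan_in, fan_out in zip(layer_sizes, layer_sizes[1:]):
        params += fan_in * fan_out + fan_out
        macs += fan_in * fan_out
    return params, macs

linear = dense_cost([100, 1])          # a linear model on 100 features
mlp = dense_cost([100, 512, 512, 1])   # hypothetical two-hidden-layer network
print(linear)  # (101, 100)
print(mlp)     # (314881, 313856)
```

Under these assumptions, the small network already needs roughly three thousand times the arithmetic of the linear model for a single prediction.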

Real-World Illustration: Autonomous Vehicles

Autonomous driving systems rely on AI models to detect pedestrians, traffic signs, and obstacles in real time. These decisions must be made within milliseconds.

Highly complex models may achieve slightly better detection accuracy but could introduce latency that compromises safety. Engineers must therefore balance model complexity with the requirement for rapid decision-making.

In practice, engineers deploy optimized models that achieve acceptable accuracy while meeting real-time latency requirements.

Real-World Illustration: Online Recommendation Systems

Online platforms such as streaming services and online retailers generate personalized recommendations for millions of users simultaneously.

Complex recommendation models may produce better personalization but could slow down response times. To maintain user experience, companies often deploy simplified versions of models for real-time inference while using more complex models offline for training and analysis.

This layered approach balances speed and complexity effectively.
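One related pattern, which goes a step beyond serving a simplified model, keeps the expensive model off the request path entirely: recommendations are precomputed in an offline batch job and served with a lookup. The sketch below assumes a hypothetical `expensive_score` function and made-up user and item vectors.

```python
def expensive_score(user_vec, item_vec):
    """Stand-in for a complex model; here a plain dot product (hypothetical)."""
    return sum(u * i for u, i in zip(user_vec, item_vec))

def precompute_top_k(users, items, k):
    """Offline batch step: score every user-item pair, keep the top k per user."""
    table = {}
    for user_id, user_vec in users.items():
        ranked = sorted(
            items,
            key=lambda item_id: expensive_score(user_vec, items[item_id]),
            reverse=True,
        )
        table[user_id] = ranked[:k]
    return table

def recommend(table, user_id, fallback):
    """Online step: a dictionary lookup, with a fallback for unseen users."""
    return table.get(user_id, fallback)

# Made-up vectors for illustration only.
users = {"u1": [1.0, 0.0], "u2": [0.0, 1.0]}
items = {"a": [0.9, 0.1], "b": [0.2, 0.8], "c": [0.5, 0.5]}

table = precompute_top_k(users, items, k=2)
print(recommend(table, "u1", fallback=["c"]))
print(recommend(table, "new_user", fallback=["c"]))
```

The online cost is independent of model complexity, at the price of recommendations that are only as fresh as the last batch run.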

Additional Trade-offs in AI Systems

Beyond the two primary trade-offs discussed above, AI practitioners frequently encounter other design considerations, including:

- Model performance versus energy consumption

- Data quantity versus data quality

- Automation versus human oversight

- Scalability versus model sophistication

These trade-offs highlight the multidimensional nature of AI system design.

Strategies for Managing Trade-offs

Organizations employ several strategies to balance competing priorities in AI systems.

One approach is model simplification through knowledge distillation, where a smaller model is trained to reproduce the outputs of a complex model, retaining most of the predictive power while reducing computational requirements.
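A common formulation of distillation trains the small "student" model to match the softened output distribution of the large "teacher" model. The sketch below shows only that soft-target loss. The temperature value and the example logits are assumptions, and practical training setups typically combine this term with a standard loss on the true labels.

```python
import math

def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; higher temperature gives a softer distribution."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """Cross-entropy between softened teacher and student distributions."""
    teacher = softmax(teacher_logits, temperature)
    student = softmax(student_logits, temperature)
    return -sum(t * math.log(s) for t, s in zip(teacher, student))

# Hypothetical logits for a three-class problem.
teacher_logits = [4.0, 1.0, 0.5]
good_student = [3.8, 1.1, 0.4]
poor_student = [0.5, 4.0, 1.0]

print(distillation_loss(good_student, teacher_logits))
print(distillation_loss(poor_student, teacher_logits))
```

The loss is smallest when the student's softened distribution matches the teacher's, so minimizing it pushes the student toward the teacher's behavior, including its relative confidence across the wrong classes.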

Another strategy is hybrid modeling, where interpretable models are used alongside complex models to provide explanations and validation.

Optimization techniques such as model pruning, quantization, and hardware acceleration can also improve inference speed without significantly reducing accuracy.
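Pruning and quantization can be illustrated on a plain list of weights. The sketch below uses one simple scheme for each, magnitude pruning and symmetric per-tensor 8-bit quantization; the weight values are made up, and production toolchains offer more refined variants.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]

def prune_by_magnitude(weights, fraction):
    """Zero out the given fraction of weights with the smallest absolute value."""
    k = int(len(weights) * fraction)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]

# Made-up weights for illustration only.
weights = [0.8, -0.05, 0.3, -1.27, 0.01, 0.6]

quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
pruned = prune_by_magnitude(weights, 0.5)

print(quantized)
print(pruned)
```

Quantization bounds the error on each weight by half the scale, while pruning removes the weights that contribute least in magnitude; whether accuracy survives either step has to be checked on validation data.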

Finally, organizations often adopt context-driven model selection, choosing models based on specific application requirements rather than purely on predictive performance.
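Context-driven selection can be expressed as constrained choice: filter the candidates by the application's hard requirements, then pick the most accurate of those that remain. The candidate names and all numbers below are placeholders rather than measurements, and the `Candidate` fields are assumptions about what a team might record.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Candidate:
    name: str
    accuracy: float      # validation accuracy in [0, 1]
    latency_ms: float    # inference latency per request
    interpretable: bool  # whether the model is treated as inherently interpretable

def select_model(candidates, max_latency_ms, require_interpretable=False):
    """Return the most accurate candidate that satisfies the constraints, or None."""
    feasible = [
        c for c in candidates
        if c.latency_ms <= max_latency_ms
        and (c.interpretable or not require_interpretable)
    ]
    if not feasible:
        return None
    return max(feasible, key=lambda c: c.accuracy)

# Placeholder values for illustration only; not benchmark results.
CANDIDATES = [
    Candidate("logistic_regression", 0.86, 0.2, True),
    Candidate("gradient_boosting", 0.90, 3.0, False),
    Candidate("deep_network", 0.92, 40.0, False),
]

print(select_model(CANDIDATES, max_latency_ms=10.0))
print(select_model(CANDIDATES, max_latency_ms=100.0, require_interpretable=True))
```

With a tight latency budget the deep network is excluded despite its higher accuracy, and with an interpretability requirement only the logistic regression remains.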

Importance of Understanding Trade-offs

Understanding trade-offs is essential for building AI systems that function effectively in real-world environments. Overemphasizing accuracy without considering interpretability or speed may lead to systems that are impractical or untrustworthy.

Successful AI systems strike a balance between predictive performance, transparency, and operational efficiency. This balance depends heavily on the context in which the AI system operates.

For example, medical and financial systems may prioritize interpretability and accountability, while recommendation systems may prioritize scalability and prediction accuracy.

Conclusion

AI model development involves navigating multiple trade-offs that influence system performance, usability, and trustworthiness. Among the most significant of these are the trade-offs between accuracy and interpretability and between speed and complexity.

Highly accurate models often rely on complex architectures that reduce transparency, while simpler models provide clearer explanations but may lack predictive power. Similarly, sophisticated models can improve accuracy but may introduce computational overhead that limits real-time performance.

Designing effective AI systems requires carefully evaluating these competing factors and selecting models that align with the operational, ethical, and regulatory requirements of the application domain. As AI continues to expand across industries, understanding and managing these trade-offs will remain a critical skill for practitioners and decision-makers alike.