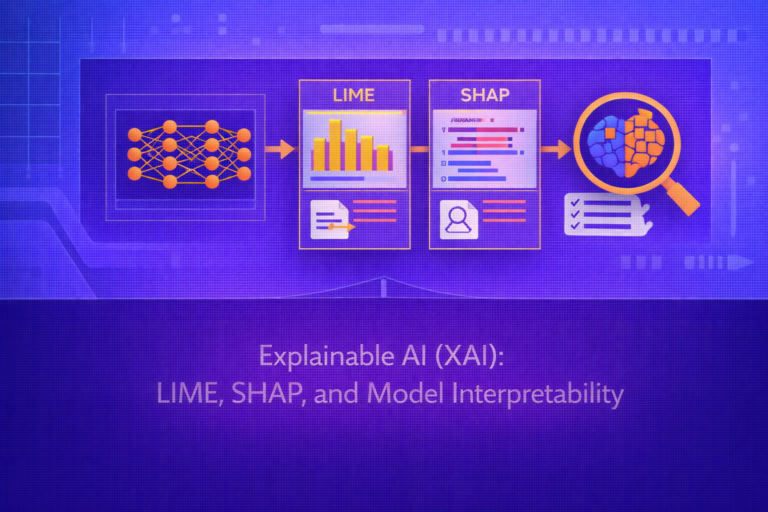

Explainable AI (XAI): LIME, SHAP, and Model Interpretability

Explainable AI (XAI) is the field concerned with making machine learning models more understandable to humans. As predictive models become more complex, especially in high-stakes domains, there is a growing need to explain why a model made a prediction, which features…