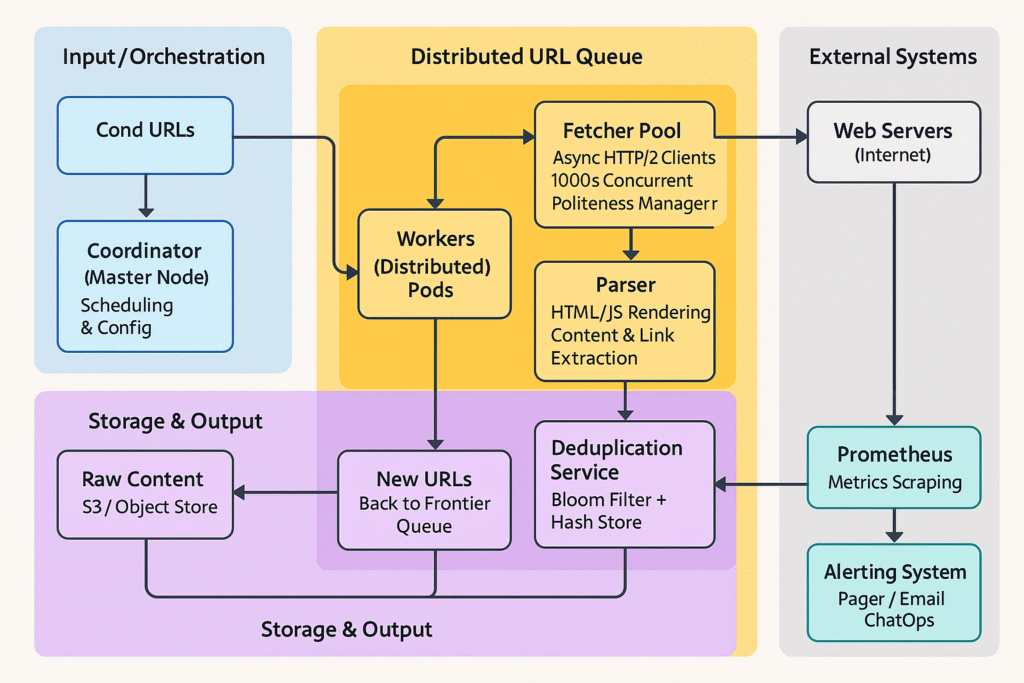

1. Overview

This case study outlines a scalable, distributed web crawler architecture designed to process 100M+ pages per day. It incorporates fault tolerance, politeness policies, and deduplication. The implementation targets .NET 8, with components for orchestration, fetching, parsing, and storage. Key libraries include:

- HttpClient for async fetching.

- AngleSharp for HTML parsing.

- Confluent.Kafka for distributed URL frontier.

- Redis for caching (robots.txt, Bloom filters).

- Cassandra for persistent storage.

- Serilog for structured logging.

- MediatR for CQRS-like command handling.

The system deploys on Kubernetes for auto-scaling, with Docker containers for each service.

2. Functional Requirements

- Discovery: Start from seed URLs and recursively fetch linked pages.

- Fetching: Download page content (HTML, images, etc.) with support for HTTP/HTTPS, handling redirects and retries.

- Parsing: Extract URLs, metadata (title, description), and structured data (e.g., via XPath/CSS selectors).

- Storage: Persist raw content, extracted URLs, and indexes in a durable store.

- Deduplication: Avoid re-crawling identical pages using hashes or fingerprints.

- Politeness: Respect crawl delays, robots.txt, and rate limits per domain.

- Monitoring: Track crawl statistics (e.g., pages fetched, errors) and support pausing/resuming.
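The politeness requirement above can be illustrated with a per-host delay scheduler. This is a minimal in-memory sketch, not the Redis-based limiter used later in the article; `ReserveSlot`, `crawlDelay`, and the 2-second delay are assumptions chosen for illustration, and the dictionary is not thread-safe.

```csharp
using System;
using System.Collections.Generic;

var crawlDelay = TimeSpan.FromSeconds(2);                      // assumed default delay per host
var nextAllowed = new Dictionary<string, DateTimeOffset>();    // host -> earliest next fetch time

// Returns how long the caller should wait before fetching 'url'
// and reserves the following slot for the same host.
TimeSpan ReserveSlot(string url, DateTimeOffset now)
{
    var host = new Uri(url).Host;
    var earliest = now;
    if (nextAllowed.TryGetValue(host, out var reserved) && reserved > now)
        earliest = reserved;
    nextAllowed[host] = earliest + crawlDelay;
    return earliest - now;
}

var start = DateTimeOffset.UtcNow;
Console.WriteLine(ReserveSlot("https://example.com/a", start)); // 00:00:00
Console.WriteLine(ReserveSlot("https://example.com/b", start)); // 00:00:02
Console.WriteLine(ReserveSlot("https://example.org/", start));  // 00:00:00
```

Requests to different hosts do not delay each other, while repeated requests to one host are spaced by the configured delay. A distributed deployment would need to keep this state in a shared store.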

3. Non-Functional Requirements

- Scalability: Handle 100M+ pages/day, scaling to thousands of nodes.

- Availability: 99.9% uptime with fault tolerance (e.g., node failures).

- Latency: Fetch < 5s p99; sustain ~1K pages/sec across the cluster.

- Efficiency: < 10% duplicate fetches; bandwidth < 1TB/hour per cluster.

- Compliance: Adhere to legal/ethical standards (e.g., no scraping protected content).

- Eventual Consistency: Acceptable for URL frontier; strong consistency for deduplication.
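The duplicate-fetch target above is commonly approached with a Bloom filter, which answers "possibly seen" or "definitely not seen" in constant time. The following is a small in-memory sketch under stated assumptions: `bitCount`, `hashCount`, `Add`, and `MightContain` are hypothetical names and sizes, and the bit positions are derived from one SHA-256 digest by double hashing. A Bloom filter never gives false negatives, but it can give false positives, with rate roughly (1 - e^(-kn/m))^k for n items, m bits, and k hashes.

```csharp
using System;
using System.Collections;
using System.Security.Cryptography;
using System.Text;

const int bitCount = 1 << 20;   // m: number of bits (assumed size)
const int hashCount = 7;        // k: number of bit positions per item (assumed)
var bits = new BitArray(bitCount);

// Derives k positions from one SHA-256 digest using double hashing: h1 + i * h2.
int[] Positions(string item)
{
    var digest = SHA256.HashData(Encoding.UTF8.GetBytes(item));
    ulong h1 = BitConverter.ToUInt64(digest, 0);
    ulong h2 = BitConverter.ToUInt64(digest, 8) | 1;   // force an odd step
    var result = new int[hashCount];
    for (int i = 0; i < hashCount; i++)
        result[i] = (int)((h1 + (ulong)i * h2) % (ulong)bitCount);
    return result;
}

// True means "possibly seen"; false means "definitely not seen".
bool MightContain(string item)
{
    foreach (var p in Positions(item))
        if (!bits[p]) return false;
    return true;
}

void Add(string item)
{
    foreach (var p in Positions(item))
        bits[p] = true;
}

Console.WriteLine(MightContain("https://example.com/a")); // False (filter is empty)
Add("https://example.com/a");
Console.WriteLine(MightContain("https://example.com/a")); // True
```

Because a false positive causes a new page to be skipped, the filter size should be chosen from the expected number of URLs and the acceptable miss rate.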

4. Estimation (Back-of-the-Envelope)

- Compute: 1K nodes × 10 cores (fetch + parse).

- Input: 1B pages to crawl; 10 new links/page → 10B URLs/year.

- Throughput: 100M pages/day → ~1,157 QPS fetch; 10:1 read:write ratio.

- Storage: 1B pages × 100KB avg → 100TB raw; 10TB indexed (compressed).

- Network: 100M pages/day × 100KB → ~10TB/day, or ~300TB/month bandwidth.
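The arithmetic behind these estimates can be rechecked with a few lines of code. This sketch uses only the inputs stated in the list (100M pages/day, 100KB average page, 1B pages total), decimal units, and a 30-day month; the variable names are illustrative.

```csharp
using System;

const double pagesPerDay = 100_000_000;     // 100M pages/day
const double avgPageBytes = 100_000;        // 100KB average page size
const double totalPages = 1_000_000_000;    // 1B pages to crawl

double fetchQps = pagesPerDay / 86_400;                          // 86,400 seconds per day
double rawStorageTb = totalPages * avgPageBytes / 1e12;          // bytes -> TB
double dailyBandwidthTb = pagesPerDay * avgPageBytes / 1e12;
double monthlyBandwidthTb = dailyBandwidthTb * 30;

Console.WriteLine($"Fetch rate: {fetchQps:F0} pages/s");               // Fetch rate: 1157 pages/s
Console.WriteLine($"Raw storage: {rawStorageTb:F0} TB");               // Raw storage: 100 TB
Console.WriteLine($"Bandwidth: {dailyBandwidthTb:F0} TB/day");         // Bandwidth: 10 TB/day
Console.WriteLine($"Bandwidth: {monthlyBandwidthTb:F0} TB/month");     // Bandwidth: 300 TB/month
```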

5. Core Design Decisions

- URL Frontier: Kafka topics partitioned by domain hash for ordered, polite crawling.

- Fetching: Async HttpClient with Polly for retries/backoff; domain-specific rate limiting via Redis.

- Parsing/Deduplication: AngleSharp for extraction; Redis Bloom filter for O(1) checks; SHA-256 fingerprints.

- Storage: Raw content to S3 (via AWS SDK); Index to Elasticsearch (via NEST).

- Orchestration: Coordinator as a hosted service; Workers as background services.

- Fault Tolerance: Circuit breakers, dead-letter Kafka topics, health checks.
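The "partitioned by domain hash" decision relies on every URL of a host mapping to the same partition, no matter which process computes the mapping. The sketch below illustrates that property only; `PartitionFor` and the partition count are hypothetical, and in a Kafka deployment the client's own partitioner would normally do the hashing once the message key is set to the host.

```csharp
using System;
using System.Security.Cryptography;
using System.Text;

// Maps a URL to a partition index from a stable hash of its host.
// SHA-256 is used because string.GetHashCode() is randomized per process in .NET.
static int PartitionFor(string url, int partitionCount)
{
    string host = new Uri(url).Host;
    byte[] digest = SHA256.HashData(Encoding.UTF8.GetBytes(host));
    uint bucket = BitConverter.ToUInt32(digest, 0);
    return (int)(bucket % (uint)partitionCount);
}

const int partitions = 12; // hypothetical partition count
bool samePartition =
    PartitionFor("https://example.com/a", partitions) ==
    PartitionFor("https://example.com/b?page=2", partitions);
Console.WriteLine(samePartition); // True
```

Keeping one host on one partition means a single consumer sees that host's URLs in order, which is what makes per-domain rate limiting straightforward.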

6. Detailed Data Flow

7. Production-Grade C# .NET Core Implementation

Project: WebCrawler.sln (Multi-project solution: Coordinator, Worker, Core, Infrastructure)

src/

├── WebCrawler.Coordinator/ (ASP.NET Core hosted service for master)

├── WebCrawler.Worker/ (Background service for fetch/parse)

├── WebCrawler.Core/ (Models, interfaces, commands)

├── WebCrawler.Infrastructure/ (Kafka, Redis, Cassandra, AWS S3, Elasticsearch)

└── WebCrawler.Monitoring/ (Prometheus metrics, Serilog)

7.1 Core Models and Commands (WebCrawler.Core)

using MediatR;

public record UrlToCrawl(string Url, int Priority = 1, DateTime? LastCrawled = null);

public record CrawlResult(string Url, string? Content, string? Error, DateTime FetchedAt);

public class PageMetadata

{

public string Url { get; set; } = string.Empty;

public string Title { get; set; } = string.Empty;

public string ContentHash { get; set; } = string.Empty;

public List<string> ExtractedUrls { get; set; } = new();

public DateTime CrawledAt { get; set; }

}

public record SeedUrlsCommand(List<string> Urls) : IRequest<Unit>;

public record EnqueueUrlsCommand(List<UrlToCrawl> Urls) : IRequest<Unit>;

7.2 Distributed Frontier (Kafka Producer/Consumer) (Infrastructure/Services/UrlFrontierService.cs)

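using Confluent.Kafka;

using System.Runtime.CompilerServices; // [EnumeratorCancellation]

using System.Text.Json; // JsonSerializer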

using WebCrawler.Core;

using Microsoft.Extensions.Logging;

using Microsoft.Extensions.Options;

public interface IUrlFrontierService

{

Task ProduceUrlsAsync(List<UrlToCrawl> urls, CancellationToken ct = default);

IAsyncEnumerable<UrlToCrawl> ConsumeUrlsAsync(string? domainFilter = null, CancellationToken ct = default);

}

public class KafkaUrlFrontierService : IUrlFrontierService, IDisposable

{

// The message key is the URL's host, so all URLs of a domain go to the same partition

private readonly IProducer<string, string> _producer;

private readonly IConsumer<Ignore, string> _consumer;

private readonly ILogger<KafkaUrlFrontierService> _logger;

public KafkaUrlFrontierService(IOptions<KafkaOptions> options, ILogger<KafkaUrlFrontierService> logger)

{

var producerConfig = new ProducerConfig { BootstrapServers = options.Value.BootstrapServers };

_producer = new ProducerBuilder<string, string>(producerConfig).Build();

var consumerConfig = new ConsumerConfig

{

BootstrapServers = options.Value.BootstrapServers,

GroupId = "crawler-workers",

AutoOffsetReset = AutoOffsetReset.Earliest,

EnableAutoCommit = false // Offsets are committed explicitly in ConsumeUrlsAsync

};

_consumer = new ConsumerBuilder<Ignore, string>(consumerConfig).Build();

_consumer.Subscribe("url-frontier");

_logger = logger;

}

public async Task ProduceUrlsAsync(List<UrlToCrawl> urls, CancellationToken ct = default)

{

var tasks = urls.Select(async url =>

{

var message = new Message<string, string> { Key = new Uri(url.Url).Host, Value = JsonSerializer.Serialize(url) };

var deliveryResult = await _producer.ProduceAsync("url-frontier", message, ct);

_logger.LogInformation("Produced URL: {Url} to partition {Partition}", url.Url, deliveryResult.Partition);

});

await Task.WhenAll(tasks);

}

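public async IAsyncEnumerable<UrlToCrawl> ConsumeUrlsAsync(string? domainFilter = null, [EnumeratorCancellation] CancellationToken ct = default)

{

while (!ct.IsCancellationRequested)

{

// C# does not allow 'yield return' inside a try block that has a catch clause,

// so the message is consumed and deserialized in the try and yielded afterwards.

ConsumeResult<Ignore, string>? consumeResult = null;

UrlToCrawl? url = null;

try

{

consumeResult = _consumer.Consume(ct); // Blocking call; returns when a message arrives or ct is cancelled

url = JsonSerializer.Deserialize<UrlToCrawl>(consumeResult.Message.Value);

}

catch (OperationCanceledException)

{

yield break;

}

catch (Exception ex)

{

_logger.LogError(ex, "Consume error");

}

if (url != null && (domainFilter == null || url.Url.Contains(domainFilter)))

{

yield return url;

}

if (consumeResult != null)

{

try

{

_consumer.Commit(consumeResult); // Runs after the caller has finished with the yielded URL

}

catch (KafkaException ex)

{

_logger.LogError(ex, "Commit error");

}

}

await Task.Delay(100, ct); // Politeness batching

}

}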

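public void Dispose()

{

_producer.Flush(TimeSpan.FromSeconds(10)); // Deliver any buffered messages before shutdown

_producer.Dispose();

_consumer.Close(); // Leave the consumer group cleanly

_consumer.Dispose();

}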

}

public class KafkaOptions

{

public string BootstrapServers { get; set; } = "localhost:9092";

}

7.3 Fetcher Service (Infrastructure/Services/FetcherService.cs)

using Polly;

using Polly.Extensions.Http;

using AngleSharp;

using AngleSharp.Dom;

using Microsoft.Extensions.Caching.Distributed;

using WebCrawler.Core;

using Microsoft.Extensions.Logging;

using System.Security.Cryptography;

using System.Text;

public interface IFetcherService

{

Task<CrawlResult> FetchAsync(string url, CancellationToken ct = default);

}

public class FetcherService : IFetcherService

{

private readonly HttpClient _httpClient;

private readonly IDistributedCache _cache; // Redis for robots.txt

private readonly ILogger<FetcherService> _logger;

private readonly IAsyncPolicy<HttpResponseMessage> _retryPolicy;

public FetcherService(HttpClient httpClient, IDistributedCache cache, ILogger<FetcherService> logger)

{

_httpClient = httpClient;

_cache = cache;

_logger = logger;

// Polly retry policy for resilience. AddPolicyHandler is an IHttpClientBuilder extension used at

// registration time, not an HttpClient method, so the policy is built here and applied in FetchAsync.

_retryPolicy = HttpPolicyExtensions

.HandleTransientHttpError()

.OrResult(msg => msg.StatusCode == System.Net.HttpStatusCode.TooManyRequests)

.WaitAndRetryAsync(3, retryAttempt => TimeSpan.FromSeconds(Math.Pow(2, retryAttempt)), // Exponential backoff

onRetry: (outcome, timespan, retryCount, context) => _logger.LogWarning("Retry {RetryCount} for {Url} after {Timespan}s", retryCount, outcome.Result?.RequestMessage?.RequestUri, timespan.TotalSeconds));

}

public async Task<CrawlResult> FetchAsync(string url, CancellationToken ct = default)

{

// Check the robots.txt verdict cached in Redis (assumes a separate step fetches robots.txt and writes this key)

var domain = new Uri(url).Host;

var robotsKey = $"robots:{domain}";

var robotsAllowed = await _cache.GetStringAsync(robotsKey, ct) != "disallowed";

if (!robotsAllowed)

{

_logger.LogWarning("Blocked by robots.txt: {Url}", url);

return new CrawlResult(url, null, "Blocked by robots.txt", DateTime.UtcNow);

}

try

{

// Rate limit BEFORE sending the request (per domain via Redis counter).

// Simplified: IDistributedCache has no atomic increment, so concurrent workers can overshoot the limit.

var rateKey = $"rate:{domain}";

var current = await _cache.GetStringAsync(rateKey, ct) ?? "0";

var count = int.Parse(current);

if (count >= 10) // 10 reqs/min per domain

{

await Task.Delay(60000, ct); // Wait 1 min

await _cache.RemoveAsync(rateKey, ct);

count = 0;

}

await _cache.SetStringAsync(rateKey, (count + 1).ToString(), new DistributedCacheEntryOptions { AbsoluteExpirationRelativeToNow = TimeSpan.FromMinutes(1) }, ct);

using var response = await _retryPolicy.ExecuteAsync(token => _httpClient.GetAsync(url, token), ct);

response.EnsureSuccessStatusCode();

var content = await response.Content.ReadAsStringAsync(ct);

return new CrawlResult(url, content, null, DateTime.UtcNow);

}

catch (Exception ex)

{

_logger.LogError(ex, "Fetch failed for {Url}", url);

return new CrawlResult(url, null, ex.Message, DateTime.UtcNow);

}

}

}

7.4 Parser and Deduplication (Infrastructure/Services/ParserService.cs)

using AngleSharp; // BrowsingContext, IBrowsingContext

using AngleSharp.Dom;

using WebCrawler.Core;

using Microsoft.Extensions.Caching.Distributed;

using System.Security.Cryptography;

using System.Text;

public interface IParserService

{

Task<PageMetadata> ParseAsync(CrawlResult result, CancellationToken ct = default);

}

public class ParserService : IParserService

{

private readonly IDistributedCache _bloomCache; // Redis for Bloom filter simulation

private readonly IBrowsingContext _context;

public ParserService(IDistributedCache bloomCache)

{

_bloomCache = bloomCache;

_context = BrowsingContext.New(AngleSharp.Configuration.Default);

}

public async Task<PageMetadata> ParseAsync(CrawlResult result, CancellationToken ct = default)

{

if (string.IsNullOrEmpty(result.Content))

return new PageMetadata { Url = result.Url };

var document = await _context.OpenAsync(req => req.Content(result.Content), ct);

var title = document.QuerySelector("title")?.TextContent ?? string.Empty;

var links = document.QuerySelectorAll("a[href]").Select(a => a.GetAttribute("href")).Where(href => !string.IsNullOrEmpty(href)).ToList();

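// Normalize and filter links: resolve against the page URL and keep only well-formed http(s) URLs

var baseUri = new Uri(result.Url);

var extractedUrls = links

.Select(link => Uri.TryCreate(baseUri, link, out var abs) ? abs : null) // Malformed hrefs yield null instead of throwing

.Where(abs => abs != null && (abs.Scheme == Uri.UriSchemeHttp || abs.Scheme == Uri.UriSchemeHttps))

.Select(abs => abs!.ToString())

.Distinct()

.Take(100) // Limit outlinks

.ToList();

// Deduplication: exact-match key lookup on the content hash (extend to a Bloom filter for scale)

var contentHash = ComputeSha256Hash(result.Content);

// Key on the SHA-256 digest itself: string.GetHashCode() is randomized per process in .NET,

// so it would produce different keys on different workers.

var bloomKey = $"bloom:{contentHash}";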

var exists = await _bloomCache.GetStringAsync(bloomKey, ct) != null;

if (exists)

{

return new PageMetadata { Url = result.Url, Title = title }; // Skip storage

}

await _bloomCache.SetStringAsync(bloomKey, "seen", new DistributedCacheEntryOptions { SlidingExpiration = TimeSpan.FromDays(30) }, ct);

return new PageMetadata

{

Url = result.Url,

Title = title,

ContentHash = contentHash,

ExtractedUrls = extractedUrls,

CrawledAt = result.FetchedAt

};

}

private static string ComputeSha256Hash(string content) =>

Convert.ToBase64String(SHA256.HashData(Encoding.UTF8.GetBytes(content)));

}

7.5 Storage Services (Infrastructure/Services/StorageService.cs)

using Amazon.S3;

using Amazon.S3.Model; // PutObjectRequest

using System.Text; // Encoding

using Nest; // Elasticsearch .NET client

using WebCrawler.Core;

public interface IStorageService

{

Task StoreRawAsync(string url, string content, CancellationToken ct = default);

Task IndexMetadataAsync(PageMetadata metadata, CancellationToken ct = default);

}

public class AwsS3StorageService : IStorageService // Partial impl; extend for ES

{

private readonly IAmazonS3 _s3Client;

public AwsS3StorageService(IAmazonS3 s3Client) => _s3Client = s3Client;

public async Task StoreRawAsync(string url, string content, CancellationToken ct = default)

{

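// Stable object key: string.GetHashCode() is randomized per process in .NET and is only 32 bits wide

var key = $"crawl/{DateTime.UtcNow:yyyy/MM/dd}/{Convert.ToHexString(System.Security.Cryptography.SHA256.HashData(System.Text.Encoding.UTF8.GetBytes(url)))}.warc";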

using var stream = new MemoryStream(Encoding.UTF8.GetBytes(content));

await _s3Client.PutObjectAsync(new PutObjectRequest

{

BucketName = "crawler-raw",

Key = key,

InputStream = stream

}, ct);

}

public async Task IndexMetadataAsync(PageMetadata metadata, CancellationToken ct = default)

{

// Elasticsearch indexing (using NEST)

// var response = await _elasticClient.IndexDocumentAsync(metadata);

// if (!response.IsValid) _logger.LogError("Index failed: {Url}", metadata.Url);

}

}

7.6 Worker Background Service (WebCrawler.Worker/WorkerService.cs)

using MediatR;

using WebCrawler.Core;

using WebCrawler.Infrastructure.Services;

using Microsoft.Extensions.Hosting;

using Microsoft.Extensions.Logging;

public class CrawlerWorkerService : BackgroundService

{

private readonly IUrlFrontierService _frontier;

private readonly IFetcherService _fetcher;

private readonly IParserService _parser;

private readonly IStorageService _storage;

private readonly IMediator _mediator;

private readonly ILogger<CrawlerWorkerService> _logger;

public CrawlerWorkerService(

IUrlFrontierService frontier,

IFetcherService fetcher,

IParserService parser,

IStorageService storage,

IMediator mediator,

ILogger<CrawlerWorkerService> logger)

{

_frontier = frontier;

_fetcher = fetcher;

_parser = parser;

_storage = storage;

_mediator = mediator;

_logger = logger;

}

protected override async Task ExecuteAsync(CancellationToken stoppingToken)

{

await foreach (var urlToCrawl in _frontier.ConsumeUrlsAsync(null, stoppingToken))

{

try

{

var result = await _fetcher.FetchAsync(urlToCrawl.Url, stoppingToken);

if (!string.IsNullOrEmpty(result.Content))

{

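var metadata = await _parser.ParseAsync(result, stoppingToken);

// The parser leaves ContentHash empty when the content was already seen, so skip storage and enqueueing

if (string.IsNullOrEmpty(metadata.ContentHash))

continue;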

await _storage.StoreRawAsync(result.Url, result.Content, stoppingToken);

await _storage.IndexMetadataAsync(metadata, stoppingToken);

// Enqueue new URLs

var newUrls = metadata.ExtractedUrls.Select(u => new UrlToCrawl(u, urlToCrawl.Priority)).ToList();

await _mediator.Send(new EnqueueUrlsCommand(newUrls), stoppingToken);

}

}

catch (Exception ex)

{

_logger.LogError(ex, "Worker error for {Url}", urlToCrawl.Url);

// Send to dead-letter topic

}

}

}

}

7.7 Coordinator (ASP.NET Core Hosted Service) (WebCrawler.Coordinator/Program.cs)

var builder = WebApplication.CreateBuilder(args);

// Add services

builder.Services.AddMediatR(cfg => cfg.RegisterServicesFromAssembly(typeof(SeedUrlsCommand).Assembly));

builder.Services.AddSingleton<IUrlFrontierService, KafkaUrlFrontierService>();

builder.Services.AddHttpClient<IFetcherService, FetcherService>();

builder.Services.AddStackExchangeRedisCache(options => options.Configuration = "localhost:6379");

builder.Services.AddSingleton<IAmazonS3>(sp => new AmazonS3Client()); // AWS config via appsettings

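builder.Services.AddSingleton<IParserService, ParserService>(); // Required by CrawlerWorkerService

builder.Services.AddSingleton<IStorageService, AwsS3StorageService>(); // Required by CrawlerWorkerService

builder.Services.AddHostedService<CrawlerWorkerService>(); // Or deploy as separate app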

// Coordinator endpoint for seeding

var app = builder.Build();

app.MapPost("/seed", async (SeedUrlsCommand cmd, IMediator mediator, IUrlFrontierService frontier) =>

{

await mediator.Send(cmd);

var urls = cmd.Urls.Select(u => new UrlToCrawl(u)).ToList();

await frontier.ProduceUrlsAsync(urls);

return Results.Ok();

});

app.Run();

7.8 DI and Configuration (WebCrawler.Worker/Program.cs – Similar for Coordinator)

// Additional: Serilog

builder.Host.UseSerilog((ctx, lc) => lc

.WriteTo.Console()

.Enrich.FromLogContext());

// Metrics (Prometheus)

builder.Services.AddOpenTelemetry().WithMetrics(metrics => metrics.AddPrometheusExporter()); // 'metrics' avoids reusing the outer 'builder' name, which does not compile

8. Scaling & Optimization Summary

| Component | Technology | Scaling Strategy | Optimization Notes |
|---|---|---|---|
| Coordinator | ASP.NET Core hosted service on Kubernetes | Single active master; leader election | Stateless config; HA via ZooKeeper |
| URL Frontier | Kafka (Partitioned) | Add brokers; sharding by domain | Compression; consumer groups |
| Fetchers | Kubernetes Pods (Async) | Auto-scale on CPU/queue depth | Connection pooling; gzip requests |
| Parser/Dedup | .NET worker pods (AngleSharp, Redis) | Horizontal pods; batch processing | SIMD for hashing; GPU for JS rendering |
| Storage | S3 + Elasticsearch | Sharded indices; replication | TTL on raw data; columnar compression |
| Monitoring | Prometheus + Grafana | Federated metrics | SLO: p99 fetch latency < 5s |

9. Challenges and Mitigations

- Infinite Loops/Spam: Mitigate with outlink limits (max 100/page) and domain blacklists.

- Legal/Ethical: Integrate robots.txt enforcement and honor site-owner opt-out requests.

- Resource Contention: Domain-based partitioning prevents overload; use proxies/VPNs for IP rotation.

- Cost: Focus 80% effort on high-value domains; use spot instances for workers.
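The robots.txt enforcement mentioned above needs a parser somewhere in the pipeline. The following is a deliberately small sketch under stated assumptions: it reads only `Disallow` rules from `User-agent: *` groups and treats them as plain path prefixes. `DisallowedPrefixes` and `IsAllowed` are hypothetical helper names, and `Allow` rules, wildcards, and longest-match precedence are not handled.

```csharp
using System;
using System.Collections.Generic;

// Collects Disallow path prefixes from the "User-agent: *" groups of a robots.txt body.
static List<string> DisallowedPrefixes(string robotsTxt)
{
    var prefixes = new List<string>();
    bool inWildcardGroup = false;
    bool lastWasUserAgent = false;
    foreach (var rawLine in robotsTxt.Split('\n'))
    {
        var line = rawLine.Split('#')[0].Trim();          // drop comments and whitespace
        int colon = line.IndexOf(':');
        if (colon < 0) continue;                          // skip blank or malformed lines
        var field = line[..colon].Trim().ToLowerInvariant();
        var value = line[(colon + 1)..].Trim();
        if (field == "user-agent")
        {
            // Consecutive User-agent lines share one group; a new run starts a new group.
            if (!lastWasUserAgent) inWildcardGroup = false;
            if (value == "*") inWildcardGroup = true;
            lastWasUserAgent = true;
        }
        else
        {
            lastWasUserAgent = false;
            if (field == "disallow" && inWildcardGroup && value.Length > 0)
                prefixes.Add(value);
        }
    }
    return prefixes;
}

// A URL is allowed unless its path starts with one of the disallowed prefixes.
static bool IsAllowed(string url, List<string> disallowed)
{
    var path = new Uri(url).PathAndQuery;
    foreach (var prefix in disallowed)
        if (path.StartsWith(prefix, StringComparison.Ordinal)) return false;
    return true;
}

var rules = DisallowedPrefixes(
    "User-agent: *\nDisallow: /private/\nDisallow: /tmp\n\nUser-agent: otherbot\nDisallow: /\n");
Console.WriteLine(IsAllowed("https://example.com/index.html", rules)); // True
Console.WriteLine(IsAllowed("https://example.com/private/a", rules));  // False
```

The resulting verdict could be cached per host so that the fetcher only performs a lookup on the hot path.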

10. Deployment and Testing

- Docker/K8s: Each service in separate deployments; HPA on CPU >70%.

- Testing: xUnit for unit tests; Integration tests with TestContainers for Kafka/Redis.

- Performance: Async everywhere; Expect 500–1000 pages/min per worker pod.

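This implementation provides a modular foundation with observability and resilience hooks, but it is not production-ready as written: Elasticsearch indexing, dead-letter publishing, and robots.txt retrieval are still stubs. Extend with JS rendering (PuppeteerSharp) for dynamic sites. Deploy via Helm charts for orchestration.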