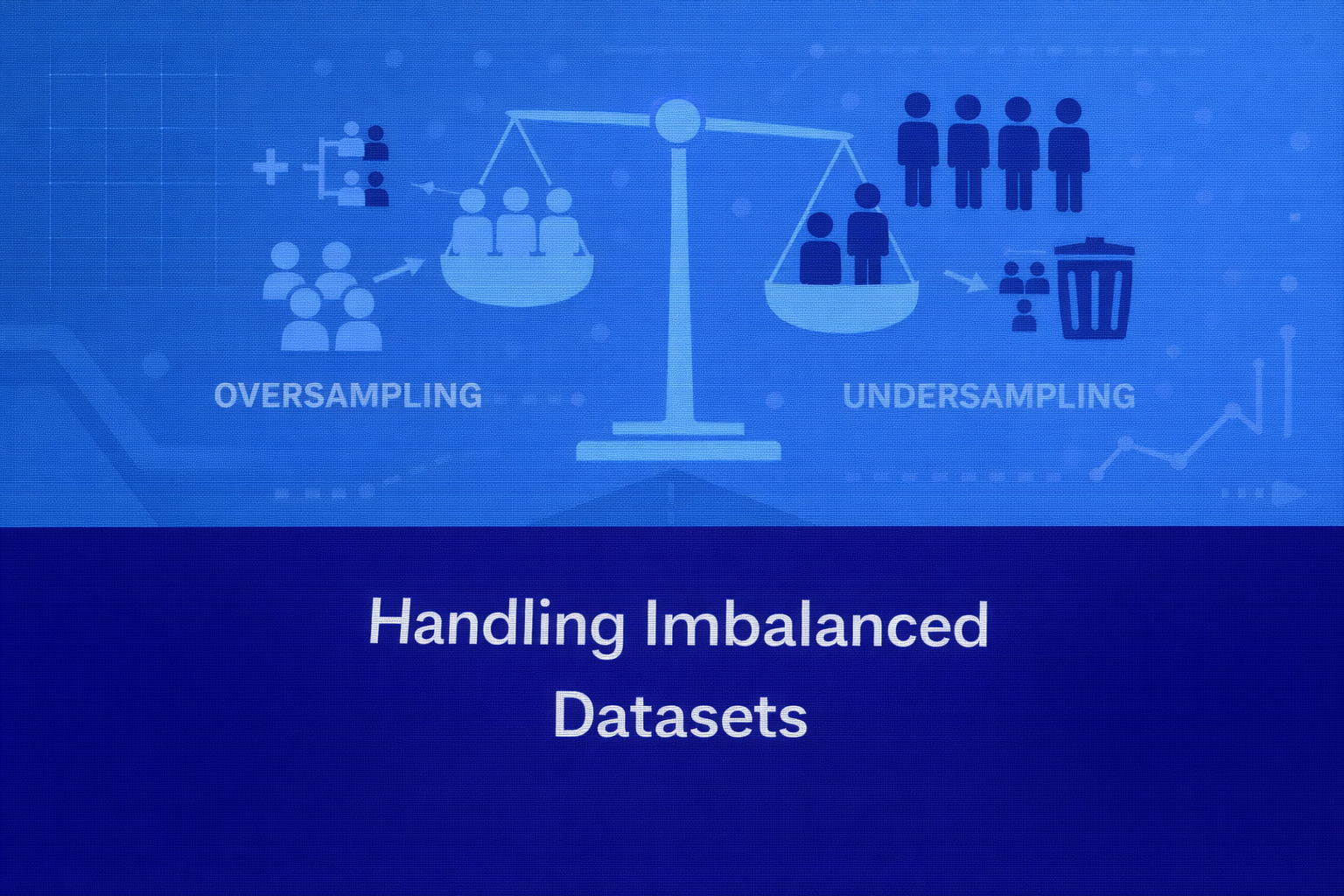

Techniques for Addressing Class Imbalance: Oversampling, Undersampling, and SMOTE

Abstract

Class imbalance is a common challenge in machine learning where one class significantly outnumbers the other classes in a dataset. This imbalance can lead to biased models that perform well on the majority class but poorly on the minority class, often resulting in misleading evaluation metrics and unreliable predictions. Many real-world applications, such as fraud detection, disease diagnosis, and anomaly detection, suffer from this issue. This article explains the concept of imbalanced datasets, why they pose a challenge for machine learning models, and how techniques such as oversampling, undersampling, and the Synthetic Minority Oversampling Technique (SMOTE) help address the problem. It also discusses evaluation considerations and practical strategies for building robust models when dealing with imbalanced data.

Introduction

Many machine learning algorithms implicitly assume that classes are roughly balanced, or at least perform best when they are. However, in many real-world scenarios, this assumption does not hold.

For example:

- In credit card fraud detection, fraudulent transactions may represent less than 1% of all transactions.

- In medical diagnosis datasets, patients with a rare disease may represent only a small fraction of the total dataset.

- In network security systems, malicious activities are often rare compared to normal activity.

These situations create imbalanced datasets, where the number of samples in one class significantly exceeds the number in the others.

A model trained on such data may achieve high accuracy simply by predicting the majority class every time, but this would fail to detect the minority class events that are often the most important.

Handling class imbalance is therefore critical for building effective machine learning systems.

Understanding Class Imbalance

Definition

A dataset is considered imbalanced when the distribution of classes is uneven, meaning one class (the majority class) dominates the dataset while another class (the minority class) appears much less frequently.

For example:

| Class | Number of Samples |
|---|---|
| Normal Transactions | 9900 |
| Fraudulent Transactions | 100 |

In this case, the fraud class represents only 1% of the data, creating a strong imbalance.

Why Imbalanced Data Is Problematic

Most machine learning algorithms aim to minimize overall prediction error. When the majority class dominates the dataset, models may learn to prioritize majority predictions.

For example, in the fraud detection dataset above, a model predicting “non-fraud” for every transaction would achieve 99% accuracy. However, such a model would fail to detect any fraud cases.

This illustrates why accuracy alone is not a reliable metric for imbalanced datasets.
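The arithmetic behind this can be checked with a few lines of Python. The sketch below is only an illustration: the labels mirror the example table (9900 normal, 100 fraudulent), and the "model" is a hypothetical stand-in that always predicts the majority class.

```python
# Labels mirror the example table: 9900 normal (0) and 100 fraudulent (1).
y_true = [0] * 9900 + [1] * 100

# Hypothetical trivial "model": always predict the majority class (non-fraud).
y_pred = [0] * len(y_true)

# Accuracy: fraction of predictions that match the true label.
correct = sum(t == p for t, p in zip(y_true, y_pred))
accuracy = correct / len(y_true)

# Recall on the fraud class: detected frauds divided by all actual frauds.
true_positives = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
fraud_recall = true_positives / sum(y_true)

print(f"accuracy     = {accuracy:.2%}")      # 99.00%
print(f"fraud recall = {fraud_recall:.2%}")  # 0.00%
```

High accuracy and zero recall on the fraud class coexist here, which is exactly the failure mode described above.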

Appropriate Evaluation Metrics

To properly evaluate models trained on imbalanced datasets, other metrics should be used.

Common metrics include:

- Precision

- Recall

- F1-score

- ROC-AUC

- Confusion matrix

These metrics provide better insight into how well the model identifies minority class instances.
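As a rough illustration, precision, recall, and F1-score can all be derived from the four cells of a binary confusion matrix. The function names and the prediction counts below are hypothetical, chosen only to make the numbers easy to follow. ROC-AUC is not shown because it requires predicted scores rather than hard labels.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (tp, fp, fn, tn), treating `positive` as the minority class."""
    tp = fp = fn = tn = 0
    for t, p in zip(y_true, y_pred):
        if p == positive and t == positive:
            tp += 1          # minority sample correctly flagged
        elif p == positive:
            fp += 1          # majority sample flagged by mistake
        elif t == positive:
            fn += 1          # minority sample that was missed
        else:
            tn += 1          # majority sample correctly ignored
    return tp, fp, fn, tn


def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall and F1; a zero denominator is reported as 0.0."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Hypothetical predictions: 100 frauds of which 60 are caught, plus 40 false alarms.
y_true = [1] * 100 + [0] * 9900
y_pred = [1] * 60 + [0] * 40 + [1] * 40 + [0] * 9860

tp, fp, fn, tn = confusion_counts(y_true, y_pred)
precision, recall, f1 = precision_recall_f1(tp, fp, fn)
print(tp, fp, fn, tn)
print(precision, recall, f1)
```

In this made-up case accuracy would still be above 99%, while precision and recall of 0.6 each show that a substantial share of fraud cases is missed.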

Approaches for Handling Imbalanced Datasets

Several techniques are used to address class imbalance. These methods generally fall into two major categories:

- Data-level techniques – modifying the dataset distribution

- Algorithm-level techniques – modifying the learning algorithm

This discussion focuses on common data-level techniques such as oversampling, undersampling, and SMOTE.

Oversampling

Definition

Oversampling involves increasing the number of samples in the minority class so that the dataset becomes more balanced.

This is achieved by duplicating existing minority class instances or generating new synthetic examples.

How Oversampling Works

Suppose a dataset contains:

- 100 minority samples

- 1000 majority samples

Oversampling may then increase the minority class until it matches the majority class size.

For example:

| Class | Samples After Oversampling |
|---|---|
| Majority | 1000 |
| Minority | 1000 |

With a balanced distribution, errors on both classes contribute comparably to the training objective, so the model is no longer rewarded for ignoring the minority class.
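A minimal sketch of random oversampling by duplication, using only the Python standard library, might look as follows. The function name `random_oversample`, the `seed` parameter, and the toy data are assumptions made for illustration, not part of any particular library.

```python
import random
from collections import Counter


def random_oversample(samples, labels, seed=0):
    """Duplicate randomly chosen samples of smaller classes until
    every class has as many samples as the largest class."""
    rng = random.Random(seed)
    by_class = {}
    for x, y in zip(samples, labels):
        by_class.setdefault(y, []).append(x)

    target = max(len(xs) for xs in by_class.values())
    out_x, out_y = [], []
    for y, xs in by_class.items():
        # Draw duplicates with replacement; the originals are always kept.
        extra = [rng.choice(xs) for _ in range(target - len(xs))]
        out_x.extend(xs + extra)
        out_y.extend([y] * target)
    return out_x, out_y


# Toy data matching the example: 1000 majority and 100 minority samples.
samples = [[float(i)] for i in range(1100)]
labels = ["majority"] * 1000 + ["minority"] * 100

X_res, y_res = random_oversample(samples, labels)
print(Counter(y_res))
```

Each minority sample appears roughly ten times in the result, which is where the overfitting risk of plain duplication comes from.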

Advantages of Oversampling

Oversampling offers several benefits:

- Avoids information loss, since all original data points are retained

- Improves model sensitivity toward minority classes

Limitations

A common drawback of oversampling is overfitting. When minority samples are simply duplicated, the model may memorize those specific instances instead of learning general patterns.

Therefore, simple oversampling methods must be applied carefully.

Example Use Case

In medical diagnosis, where disease cases are rare, oversampling helps the model learn patterns associated with the disease rather than ignoring it because of its low frequency.

Undersampling

Definition

Undersampling reduces the number of samples in the majority class to achieve a balanced dataset.

Instead of increasing minority samples, this method removes some majority class examples.

How Undersampling Works

Using the earlier example:

| Class | Original Samples |
|---|---|
| Majority | 1000 |
| Minority | 100 |

Undersampling may reduce the majority class to 100 samples.

Resulting dataset:

| Class | Samples After Undersampling |
|---|---|
| Majority | 100 |
| Minority | 100 |
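Random undersampling can be sketched in much the same way. The version below is a simplified illustration that assumes samples are removed uniformly at random; the function name `random_undersample` and the toy data are hypothetical.

```python
import random
from collections import Counter


def random_undersample(samples, labels, seed=0):
    """Randomly keep only as many samples per class as the smallest class has."""
    rng = random.Random(seed)
    by_class = {}
    for x, y in zip(samples, labels):
        by_class.setdefault(y, []).append(x)

    target = min(len(xs) for xs in by_class.values())
    out_x, out_y = [], []
    for y, xs in by_class.items():
        # Sample without replacement, so no sample is kept twice.
        kept = rng.sample(xs, target)
        out_x.extend(kept)
        out_y.extend([y] * target)
    return out_x, out_y


# Toy data matching the example: 1000 majority and 100 minority samples.
samples = [[float(i)] for i in range(1100)]
labels = ["majority"] * 1000 + ["minority"] * 100

X_res, y_res = random_undersample(samples, labels)
print(Counter(y_res))
```

In this example 900 of the 1000 majority samples are discarded.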

Advantages of Undersampling

Undersampling provides several advantages:

- Reduces dataset size

- Speeds up training time

- Can remove redundant majority samples

Limitations

The primary disadvantage is information loss.

By removing majority class samples, potentially useful information may be discarded, which can negatively impact model performance.

Example Use Case

In very large datasets with millions of samples, undersampling can make model training more computationally efficient while maintaining balanced classes.

Synthetic Minority Oversampling Technique (SMOTE)

Definition

SMOTE is an advanced oversampling technique that generates synthetic minority class samples instead of duplicating existing ones.

This method helps reduce the overfitting problem associated with simple oversampling.

How SMOTE Works

SMOTE generates new samples by interpolating between existing minority class points.

The process involves:

- Selecting a minority class sample.

- Finding its k nearest neighbors within the minority class.

- Choosing one of those neighbors at random and creating a synthetic point at a random position along the line segment between the sample and that neighbor.

This produces new data points that resemble real minority class samples but introduce additional diversity.
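The steps above can be sketched in plain Python. This is a simplified illustration rather than a reference implementation: it picks base samples at random instead of iterating over every minority sample, uses Euclidean distance, and the names `smote_sketch`, `n_new`, `k`, and `gap` are assumptions introduced here.

```python
import math
import random


def smote_sketch(minority, n_new, k=5, seed=0):
    """Generate `n_new` synthetic points by interpolating between minority
    samples and their nearest minority-class neighbors (simplified SMOTE)."""
    if len(minority) < 2:
        raise ValueError("need at least two minority samples to interpolate")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)

    synthetic = []
    for _ in range(n_new):
        # Step 1: select a minority class sample.
        i = rng.randrange(len(minority))
        base = minority[i]

        # Step 2: find its k nearest neighbors within the minority class.
        others = [p for j, p in enumerate(minority) if j != i]
        others.sort(key=lambda p: math.dist(base, p))
        neighbor = rng.choice(others[:k])

        # Step 3: create a point at a random position on the segment
        # between the sample and the chosen neighbor.
        gap = rng.random()
        synthetic.append([b + gap * (n - b) for b, n in zip(base, neighbor)])
    return synthetic


# Hypothetical 2-D minority samples.
minority = [[2.0, 3.0], [4.0, 5.0], [3.0, 6.0], [5.0, 4.0]]
new_points = smote_sketch(minority, n_new=10, k=2)
print(new_points[:3])
```

Because each synthetic point is a convex combination of two real minority samples, it always lies inside the region spanned by the minority class, which is also why SMOTE can produce implausible points when that region overlaps the majority class.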

Example

Suppose two minority samples have feature values:

Sample A: (2, 3)

Sample B: (4, 5)

SMOTE may generate a synthetic point such as:

(3, 4)

This new point lies between the two samples and represents a plausible minority instance.
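The midpoint corresponds to one particular interpolation weight. Writing that weight as $\lambda$ (a symbol introduced here for illustration), the synthetic point is:

$$
x_{\text{new}} = x_A + \lambda \, (x_B - x_A), \qquad \lambda \in [0, 1]
$$

With $\lambda = 0.5$ this gives $(2, 3) + 0.5 \cdot (2, 2) = (3, 4)$. Other weights give other points on the same segment; for instance, $\lambda = 0.25$ gives $(2.5, 3.5)$.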

Advantages of SMOTE

SMOTE offers several benefits:

- Reduces overfitting compared to duplication

- Introduces variability in minority samples

- Improves model generalization

Limitations

SMOTE may also introduce challenges such as:

- Generating noisy synthetic samples

- Creating unrealistic feature combinations

- Increasing computational complexity

Therefore, SMOTE is often combined with other preprocessing steps, such as cleaning methods that remove noisy or overlapping samples.

Variants of SMOTE

Several improved versions of SMOTE exist, including:

- Borderline-SMOTE

- SMOTE-Tomek Links

- ADASYN (Adaptive Synthetic Sampling)

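Borderline-SMOTE and ADASYN concentrate synthetic samples on minority points that lie near the class boundary or in regions that are harder to learn, while SMOTE-Tomek follows SMOTE with a cleaning step that removes Tomek links (pairs of mutually nearest samples from opposite classes).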

Combining Sampling Techniques

Practitioners often combine oversampling and undersampling methods.

For example:

- Apply SMOTE to increase minority samples

- Use undersampling to reduce excessive majority samples

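This hybrid approach keeps the dataset at a manageable size while discarding fewer majority samples than undersampling alone and creating fewer synthetic samples than oversampling alone.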
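One way to picture the hybrid approach is to meet both classes at an intermediate size. The sketch below is an assumption-laden illustration: the oversampling step interpolates between random pairs of minority samples as a simplified stand-in for SMOTE (it skips the nearest-neighbor search), and the function name `hybrid_resample` and the target of 300 samples per class are hypothetical.

```python
import random


def hybrid_resample(minority, majority, target, seed=0):
    """Grow the minority class and shrink the majority class to `target` each."""
    if len(minority) < 2 or not len(minority) <= target <= len(majority):
        raise ValueError("target must lie between the two class sizes")
    rng = random.Random(seed)

    # Oversampling step: interpolate between random pairs of minority samples.
    # Simplified stand-in for SMOTE: no nearest-neighbor search is performed.
    synthetic = []
    for _ in range(target - len(minority)):
        a, b = rng.sample(minority, 2)
        gap = rng.random()
        synthetic.append([x + gap * (y - x) for x, y in zip(a, b)])

    # Undersampling step: keep a random subset of the majority class.
    kept_majority = rng.sample(majority, target)
    return minority + synthetic, kept_majority


# Toy data: 100 minority and 1000 majority samples with two features each.
minority = [[float(i), float(i) + 1.0] for i in range(100)]
majority = [[float(i), -float(i)] for i in range(1000)]

new_minority, new_majority = hybrid_resample(minority, majority, target=300)
print(len(new_minority), len(new_majority))
```

Compared with the earlier examples, only 200 synthetic minority points are created and 700 majority samples are dropped, instead of 900 of either.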

Real-World Applications

Handling imbalanced datasets is essential in many domains.

Fraud Detection

Financial institutions analyze millions of transactions where fraudulent activities represent a tiny fraction. Sampling techniques help models detect fraud patterns effectively.

Medical Diagnosis

Rare diseases often appear in small numbers within healthcare datasets. Oversampling techniques help diagnostic models learn disease patterns despite the small number of positive cases.

Network Intrusion Detection

Cybersecurity systems monitor large volumes of network traffic where malicious events are rare. Addressing class imbalance improves anomaly detection systems.

Best Practices

Several best practices should be followed when working with imbalanced datasets.

First, evaluation metrics must be chosen carefully. Accuracy alone should not be used.

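Second, sampling techniques should be applied only to the training dataset, after the train/test split, and never to the testing dataset. The test set should keep the original class distribution so that evaluation reflects real-world conditions, and resampling before splitting can leak copies or near-copies of test samples into the training data.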
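The ordering matters more than the specific sampler. As a hedged sketch, the code below first performs a stratified split and only then oversamples the training indices by duplication; the helper `stratified_split`, the 20% test fraction, and the toy labels are all assumptions for illustration.

```python
import random
from collections import Counter


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) with similar class proportions in both parts."""
    rng = random.Random(seed)
    by_class = {}
    for i, y in enumerate(labels):
        by_class.setdefault(y, []).append(i)

    train_idx, test_idx = [], []
    for idxs in by_class.values():
        rng.shuffle(idxs)
        n_test = round(len(idxs) * test_fraction)
        test_idx.extend(idxs[:n_test])
        train_idx.extend(idxs[n_test:])
    return train_idx, test_idx


# Toy labels: 1000 majority (0) and 100 minority (1) samples.
labels = [0] * 1000 + [1] * 100
train_idx, test_idx = stratified_split(labels)

# Resample the training indices only: duplicate random minority indices
# until both classes are the same size. The test indices are left untouched.
rng = random.Random(1)
train_minority = [i for i in train_idx if labels[i] == 1]
train_majority = [i for i in train_idx if labels[i] == 0]
extra = [rng.choice(train_minority)
         for _ in range(len(train_majority) - len(train_minority))]
balanced_train_idx = train_idx + extra

print(Counter(labels[i] for i in balanced_train_idx))  # balanced training set
print(Counter(labels[i] for i in test_idx))            # original imbalance kept
```

Because the duplicates are drawn from training indices alone, no test sample can appear in the training data.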

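Third, cross-validation, preferably stratified so that every fold contains minority samples, should be used to obtain reliable performance estimates. Any resampling should be repeated inside each training fold rather than applied once to the full dataset.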
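A stratified k-fold split can be sketched with the standard library alone. The generator below deals the indices of each class round-robin across the folds; its name `stratified_kfold` and the choice of five folds are assumptions, and the comment marks where a resampling step would go.

```python
import random


def stratified_kfold(labels, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs; each class is spread evenly over k folds."""
    rng = random.Random(seed)
    by_class = {}
    for i, y in enumerate(labels):
        by_class.setdefault(y, []).append(i)

    folds = [[] for _ in range(k)]
    for idxs in by_class.values():
        rng.shuffle(idxs)
        # Deal the shuffled indices of this class round-robin across the folds.
        for pos, i in enumerate(idxs):
            folds[pos % k].append(i)

    for f in range(k):
        val_idx = folds[f]
        train_idx = [i for g in range(k) if g != f for i in folds[g]]
        yield train_idx, val_idx


# Toy labels: 1000 majority (0) and 100 minority (1) samples.
labels = [0] * 1000 + [1] * 100

for train_idx, val_idx in stratified_kfold(labels, k=5):
    n_minority = sum(labels[i] for i in val_idx)
    # Any oversampling, undersampling, or SMOTE step would be applied to
    # train_idx here, never to val_idx.
    print(len(train_idx), len(val_idx), n_minority)
```

Every validation fold keeps the original 10:1 ratio, so the per-fold metrics are comparable with each other and with the untouched test set.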

Finally, domain knowledge should guide data preprocessing decisions.

Conclusion

Imbalanced datasets are a common challenge in real-world machine learning applications, particularly in domains involving rare events such as fraud detection, disease diagnosis, and anomaly detection. Standard machine learning algorithms often struggle with such data distributions, leading to biased models that favor majority classes.

Techniques such as oversampling, undersampling, and SMOTE provide effective ways to balance datasets and improve model performance on minority classes. Each method has advantages and limitations, and the appropriate choice depends on dataset characteristics, problem context, and computational constraints.

Successfully handling imbalanced data enables machine learning systems to detect rare but critical events, ultimately improving decision-making and real-world impact.