Strategies for Creating and Selecting Features to Enhance Model Performance

Abstract

Feature engineering is one of the most critical steps in the machine learning pipeline. It involves transforming raw data into meaningful input variables, or features, that improve a model’s ability to learn patterns and make accurate predictions. While advanced algorithms receive significant attention, many successful machine learning systems depend more on well-designed features than on complex models. Effective feature engineering can significantly improve model performance, reduce overfitting, and enhance interpretability. This article explains the principles, techniques, and best practices for creating and selecting features that strengthen machine learning models.

Introduction

Machine learning models learn patterns from data by analyzing features. A feature is an individual measurable property or characteristic of a dataset used as input for a model.

For example, in a house price prediction system, possible features might include:

- Size of the house

- Number of bedrooms

- Location

- Age of the property

- Distance to nearby facilities

These features help the model understand relationships between inputs and outputs.

However, raw data often contains noise, inconsistencies, and irrelevant variables. Feature engineering transforms raw data into a structured format that machine learning algorithms can effectively use.

In many practical machine learning projects, the quality of the features often matters more than the complexity of the algorithm.

Feature engineering typically involves two major activities:

- Feature creation

- Feature selection

Both processes help improve predictive performance and model generalization.

Understanding Feature Engineering

Feature engineering refers to the process of creating, transforming, and selecting variables that enable machine learning models to learn meaningful patterns from data.

The goal is to represent the underlying problem in a way that makes it easier for algorithms to detect relationships.

Feature engineering can include:

- Creating new variables

- Transforming existing variables

- Encoding categorical values

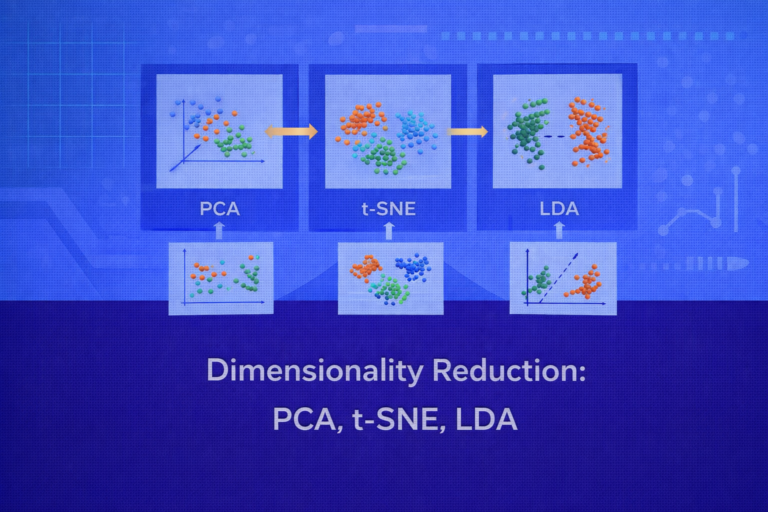

- Reducing dimensionality

- Selecting the most important features

These steps help improve the signal-to-noise ratio in datasets.

Importance of Feature Engineering

Feature engineering influences machine learning models in several ways.

First, it improves model accuracy by providing relevant information that algorithms can use for learning.

Second, it reduces model complexity by eliminating irrelevant or redundant features.

Third, it enhances model interpretability by using meaningful variables that are easier for humans to understand.

Fourth, it helps reduce overfitting by removing noise and unnecessary variables.

In many machine learning competitions and real-world applications, well-designed features are often a key factor that separates high-performing models from mediocre ones.

Feature Creation Techniques

Feature creation involves generating new features from existing data.

These features often reveal hidden relationships that are not directly visible in the raw data.

Domain-Based Feature Creation

One of the most effective methods for feature creation involves leveraging domain knowledge.

Domain experts understand which factors influence outcomes in specific fields.

For example, in healthcare analytics, features such as patient age, lifestyle habits, and family medical history may significantly influence disease prediction models.

In financial systems, credit utilization ratio or transaction frequency may be important predictors of financial risk.

Domain knowledge helps create features that capture meaningful relationships in data.
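As a small illustration of the financial example, the credit utilization ratio is commonly defined as the outstanding balance divided by the total credit limit. The sketch below assumes hypothetical `balance` and `credit_limit` inputs and leaves the ratio missing (`None`) when the limit is zero, rather than guessing a value.

```python
from typing import Optional

def credit_utilization(balance: float, credit_limit: float) -> Optional[float]:
    """Return balance / credit_limit, or None when the limit is zero or negative."""
    # A zero or negative limit makes the ratio undefined; leave it missing
    # so a later imputation step can decide how to handle it.
    if credit_limit <= 0:
        return None
    return balance / credit_limit

# Hypothetical accounts: (balance, credit_limit)
accounts = [(1500.0, 5000.0), (4900.0, 5000.0), (0.0, 0.0)]
ratios = [credit_utilization(b, lim) for b, lim in accounts]
print(ratios)  # [0.3, 0.98, None]
```

A ratio like this encodes a domain insight (how close a customer is to their limit) that neither raw column expresses on its own.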

Mathematical Transformations

Mathematical transformations convert raw features into more useful forms.

Common transformations include:

- Logarithmic transformations

- Square root transformations

- Polynomial features

- Ratios and differences

For example, instead of using raw income values, a model may perform better using a log-transformed income feature, especially when the income distribution is highly skewed.

Polynomial features can also capture nonlinear relationships between variables.
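A minimal sketch of these transformations using only the Python standard library. The income values are made up for illustration. `math.log1p` computes log(1 + x), which keeps zero incomes defined. `polynomial_features` expands two inputs into degree-2 terms by hand; scikit-learn's `PolynomialFeatures` does the same job for arbitrary inputs and degrees.

```python
import math

def log_transform(values):
    """log(1 + x): compresses a right-skewed, non-negative feature and keeps 0 defined."""
    return [math.log1p(v) for v in values]

def sqrt_transform(values):
    """Square root: a milder compression than the logarithm, for non-negative values."""
    return [math.sqrt(v) for v in values]

def polynomial_features(x1, x2):
    """Degree-2 expansion of two features: x1, x2, x1^2, x1*x2, x2^2."""
    return [x1, x2, x1 * x1, x1 * x2, x2 * x2]

def difference_and_ratio(a, b):
    """Difference a - b and ratio a / b (None when b is zero)."""
    return a - b, (a / b if b != 0 else None)

incomes = [0, 20_000, 45_000, 1_200_000]  # hypothetical, heavily skewed
print([round(v, 2) for v in log_transform(incomes)])
print(polynomial_features(2.0, 3.0))  # [2.0, 3.0, 4.0, 6.0, 9.0]
print(difference_and_ratio(10.0, 4.0))  # (6.0, 2.5)
```

After the log transform, the gap between 45,000 and 1,200,000 shrinks from a factor of about 27 to a difference of roughly 3.3 units, so a single extreme income no longer dominates the feature's range.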

Aggregation Features

Aggregation combines multiple data points into summary statistics.

Examples include:

- Average transaction amount per user

- Total purchases in the past month

- Maximum temperature in a day

- Number of visits in the last week

Aggregation features are particularly useful for time-series and behavioral datasets.

For example, in fraud detection systems, the number of transactions made within a short time window may indicate suspicious activity.
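The sketch below computes per-user aggregates from a hypothetical list of `(user_id, timestamp_seconds, amount)` transactions, plus the largest number of a user's transactions that fall inside any sliding time window, the kind of burst signal a fraud model might use. The one-hour window is an arbitrary choice for illustration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical transactions: (user_id, unix_timestamp_seconds, amount)
transactions = [
    ("u1", 1_000, 20.0),
    ("u1", 1_600, 35.0),
    ("u1", 2_000, 15.0),
    ("u2", 1_000, 500.0),
    ("u2", 90_000, 120.0),
]

def per_user_aggregates(rows):
    """Mean, total, and maximum amount plus transaction count for each user."""
    amounts = defaultdict(list)
    for user, _, amount in rows:
        amounts[user].append(amount)
    return {
        user: {"mean": mean(vals), "total": sum(vals), "max": max(vals), "count": len(vals)}
        for user, vals in amounts.items()
    }

def max_transactions_in_window(rows, user, window_seconds=3_600):
    """Largest number of the user's transactions inside any window of the given length."""
    times = sorted(t for u, t, _ in rows if u == user)
    best, start = 0, 0
    for end in range(len(times)):
        # Advance the left edge until the window spans at most window_seconds.
        while times[end] - times[start] > window_seconds:
            start += 1
        best = max(best, end - start + 1)
    return best

aggs = per_user_aggregates(transactions)
print(aggs["u1"])
print(max_transactions_in_window(transactions, "u1"))  # 3
print(max_transactions_in_window(transactions, "u2"))  # 1
```

In a real pipeline these aggregates would be computed only from transactions that occurred before the moment a prediction is made.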

Time-Based Features

Time-related features are extremely valuable in many machine learning systems.

Examples include:

- Day of the week

- Month of the year

- Time since last event

- Hour of transaction

- Seasonal indicators

For instance, online shopping patterns often vary during weekends or holiday seasons.

Capturing temporal patterns helps models learn behavior trends.
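A minimal sketch of extracting these time-based features with Python's `datetime` module. The feature names and the use of calendar quarter as a seasonal indicator are illustrative choices; a production system might add a holiday calendar instead.

```python
from datetime import datetime
from typing import Optional

def time_features(ts: datetime, previous: Optional[datetime] = None) -> dict:
    """Derive calendar and recency features from a single event timestamp."""
    features = {
        "day_of_week": ts.weekday(),          # Monday = 0 ... Sunday = 6
        "is_weekend": ts.weekday() >= 5,
        "month": ts.month,                    # 1 ... 12
        "hour": ts.hour,                      # 0 ... 23
        "quarter": (ts.month - 1) // 3 + 1,   # simple seasonal indicator, 1 ... 4
    }
    # Hours since the previous event; missing for a user's first event.
    features["hours_since_last_event"] = (
        (ts - previous).total_seconds() / 3600 if previous is not None else None
    )
    return features

f = time_features(datetime(2024, 12, 21, 14, 30), previous=datetime(2024, 12, 20, 14, 30))
print(f)
```

Cyclical values such as hour or month are sometimes further encoded with sine and cosine so that 23:00 and 00:00 end up close together, but that step is not shown here.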

Interaction Features

Interaction features capture relationships between multiple variables.

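For example, a house price prediction model may benefit from combining:

- House size

- Location quality

A feature such as the product of house size and a location-quality score, or the average price per square foot in the surrounding neighborhood computed from training data only, may be more informative than either variable alone. The price per square foot of the house being predicted cannot be used, because it is derived from the target price itself.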

These interaction terms help models learn complex relationships.
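A minimal sketch of an interaction term, assuming each house record has a numeric `size_sqft` and a hypothetical `location_score` (for example, a 1 to 10 rating). Multiplying them lets a linear model express the idea that extra square footage is worth more in a better location, which it cannot learn from the two columns added separately.

```python
def add_interaction(rows, a, b, name=None):
    """Return copies of the rows with a new column equal to row[a] * row[b]."""
    name = name or f"{a}_x_{b}"
    return [{**row, name: row[a] * row[b]} for row in rows]

# Hypothetical houses; location_score is an assumed 1-10 rating.
houses = [
    {"size_sqft": 1200, "location_score": 4},
    {"size_sqft": 1200, "location_score": 9},
    {"size_sqft": 2000, "location_score": 4},
]
rows = add_interaction(houses, "size_sqft", "location_score")
for row in rows:
    print(row)
```

The two 1,200 sq ft houses now differ on the interaction column (4,800 versus 10,800), while the original records are left unmodified. Tree-based models can often discover such interactions on their own, so explicit terms tend to help linear models most.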

Feature Transformation

Feature transformation modifies existing features to improve model learning.

Scaling and Normalization

Many machine learning algorithms perform better when features are on similar scales.

Common scaling techniques include:

- Min-Max scaling

- Standardization (z-score normalization)

- Robust scaling

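For example, if one feature ranges between 0 and 1 while another ranges between 0 and 10,000, distance-based algorithms such as k-nearest neighbors, and models trained with gradient descent, can be dominated by the feature with the larger range.

Scaling puts all variables on comparable ranges. Tree-based models, which split on one feature at a time, are largely insensitive to feature scale.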
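A minimal sketch of the three scaling techniques in plain Python. Each `fit_*` function learns its statistics from training data only and returns a function that is then applied unchanged to new data; fitting on the full dataset would leak test-set statistics into training. The quartiles use the `"inclusive"` method of `statistics.quantiles`, and other quartile definitions give slightly different values.

```python
from statistics import mean, median, pstdev, quantiles

def fit_min_max(train):
    """Return a function mapping values to [0, 1] using the training min and max."""
    lo, hi = min(train), max(train)
    span = (hi - lo) or 1.0  # constant feature: avoid division by zero
    return lambda x: (x - lo) / span

def fit_standard(train):
    """Return a z-score function using the training mean and population standard deviation."""
    mu, sigma = mean(train), pstdev(train) or 1.0
    return lambda x: (x - mu) / sigma

def fit_robust(train):
    """Return a function that centres on the median and divides by the interquartile range."""
    q1, _, q3 = quantiles(train, n=4, method="inclusive")
    med, iqr = median(train), (q3 - q1) or 1.0
    return lambda x: (x - med) / iqr

train = [1.0, 2.0, 3.0, 4.0, 100.0]  # hypothetical feature with one outlier
for name, fit in [("min-max", fit_min_max), ("z-score", fit_standard), ("robust", fit_robust)]:
    scale = fit(train)  # statistics come from the training data only
    print(name, [round(scale(v), 3) for v in train])
```

With the outlier present, min-max scaling squeezes the four ordinary values into a narrow band near 0, while robust scaling keeps them spread out. scikit-learn provides `MinMaxScaler`, `StandardScaler`, and `RobustScaler` for production use.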

Encoding Categorical Variables

Machine learning algorithms typically require numerical inputs. Therefore, categorical variables must be encoded into numerical representations.

Common encoding techniques include:

- One-hot encoding

- Label encoding

- Target encoding

For example, a categorical feature representing cities might be transformed into binary indicator variables.

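Encoding methods must be chosen carefully to avoid introducing unintended relationships. Label encoding, for instance, assigns integers that imply an ordering between categories that may not exist, and target encoding can leak the target into the features unless it is computed on training data only (ideally out-of-fold).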
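A minimal sketch of the three encodings in plain Python. The target encoding blends each category's mean target with the global mean (a common smoothing scheme); the `smoothing` value of 1.0 is an arbitrary assumption, and the mapping must be fitted on training rows only. Unseen categories fall back to the global mean.

```python
from collections import defaultdict

def one_hot(values):
    """Map each category to a binary indicator vector, one column per category."""
    categories = sorted(set(values))
    return categories, [[int(v == c) for c in categories] for v in values]

def label_encode(values):
    """Map each category to an integer code (the codes imply an order that may not exist)."""
    mapping = {c: i for i, c in enumerate(sorted(set(values)))}
    return mapping, [mapping[v] for v in values]

def fit_target_encoding(values, targets, smoothing=1.0):
    """Smoothed mean of the target per category, fitted on training data only."""
    prior = sum(targets) / len(targets)
    sums, counts = defaultdict(float), defaultdict(int)
    for v, y in zip(values, targets):
        sums[v] += y
        counts[v] += 1
    # Rare categories are pulled toward the global mean (the prior).
    encoding = {c: (sums[c] + smoothing * prior) / (counts[c] + smoothing) for c in counts}
    return encoding, prior  # prior is the fallback for unseen categories

cities = ["Paris", "Lima", "Paris", "Oslo"]
print(one_hot(cities))
print(label_encode(cities))
enc, prior = fit_target_encoding(cities, [1, 0, 1, 0])
print(enc, prior)
```

One-hot encoding avoids any implied ordering but adds one column per category, which becomes costly for high-cardinality features; that trade-off is where target encoding is usually considered.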

Text Feature Extraction

When dealing with text data, raw text must be converted into numerical representations.

Common approaches include:

- Bag-of-words

- TF-IDF

- Word embeddings

- Sentence embeddings

These techniques convert textual content into vectors that machine learning models can process.
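A minimal sketch of bag-of-words and TF-IDF in plain Python, with a deliberately simple tokenizer. The IDF uses the smoothed form ln((1 + N) / (1 + df)) + 1, which is one common variant; other definitions exist, and library implementations typically also normalize each document vector. Word and sentence embeddings require pre-trained models and are not shown.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase and keep runs of letters (a deliberately simple tokenizer)."""
    return re.findall(r"[a-z]+", text.lower())

def bag_of_words(docs):
    """Return the sorted vocabulary and a word-count vector per document."""
    vocab = sorted({tok for doc in docs for tok in tokenize(doc)})
    counts = [Counter(tokenize(doc)) for doc in docs]
    return vocab, [[c[w] for w in vocab] for c in counts]

def tf_idf(docs):
    """Weight raw counts by a smoothed inverse document frequency."""
    vocab, bow = bag_of_words(docs)
    n_docs = len(docs)
    # Document frequency: how many documents contain each word.
    df = [sum(1 for row in bow if row[j] > 0) for j in range(len(vocab))]
    idf = [math.log((1 + n_docs) / (1 + d)) + 1 for d in df]
    return vocab, [[tf * w for tf, w in zip(row, idf)] for row in bow]

docs = ["cheap flights to Paris", "cheap hotels in Paris", "museum tickets"]
vocab, bow = bag_of_words(docs)
_, weights = tf_idf(docs)
print(vocab)
print(bow[0])  # [1, 1, 0, 0, 0, 1, 0, 1]
```

Because "cheap" appears in two of the three documents, it receives a lower TF-IDF weight in the first document than the rarer word "flights".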

Image Feature Extraction

In computer vision systems, images must be transformed into numerical features.

Techniques include:

- Pixel-based features

- Edge detection

- Convolutional neural network feature extraction

Modern deep learning models automatically learn features from images during training.
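To make edge-based features concrete, the sketch below applies the standard 3x3 Sobel kernels to a tiny grayscale image stored as a list of lists and summarizes the gradient magnitude into two hand-crafted features. The feature names are hypothetical, and the kernel is applied as a cross-correlation without padding, so the output is two pixels smaller in each dimension.

```python
import math

SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]

def apply_kernel(image, kernel):
    """Apply a 3x3 kernel to every interior pixel (cross-correlation, no padding)."""
    h, w = len(image), len(image[0])
    return [
        [sum(kernel[di][dj] * image[i + di - 1][j + dj - 1]
             for di in range(3) for dj in range(3))
         for j in range(1, w - 1)]
        for i in range(1, h - 1)
    ]

def edge_features(image):
    """Mean and maximum Sobel gradient magnitude over the interior pixels."""
    gx, gy = apply_kernel(image, SOBEL_X), apply_kernel(image, SOBEL_Y)
    mags = [math.hypot(a, b) for rx, ry in zip(gx, gy) for a, b in zip(rx, ry)]
    return {"mean_edge": sum(mags) / len(mags), "max_edge": max(mags)}

edge_image = [[0, 0, 0, 255, 255] for _ in range(5)]  # dark left, bright right
flat_image = [[100] * 5 for _ in range(5)]            # uniform, no edges
print(edge_features(edge_image))  # {'mean_edge': 680.0, 'max_edge': 1020.0}
print(edge_features(flat_image))  # {'mean_edge': 0.0, 'max_edge': 0.0}
```

Convolutional networks learn many such kernels automatically instead of relying on fixed ones like Sobel.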

Feature Selection Techniques

Feature selection identifies the most important variables while removing irrelevant or redundant features.

This process can improve model performance and reduce computational cost.

Feature selection techniques generally fall into three categories.

Filter Methods

Filter methods evaluate features based on statistical measures before training the model.

Examples include:

- Correlation analysis

- Mutual information

- Chi-square tests

- Variance threshold

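These methods are fast because they do not require training a model. However, most of them score each feature independently, so they can miss features that are useful only in combination with others.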
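A minimal sketch of two filter methods, a variance threshold and a ranking by absolute Pearson correlation with the target, using made-up data. Pearson correlation only captures linear relationships; mutual information can also detect nonlinear ones. Treating a zero-variance feature as having zero correlation is a choice made here for convenience.

```python
from statistics import mean, pvariance

def variance_filter(columns, threshold=0.0):
    """Keep the names of features whose population variance exceeds the threshold."""
    return [name for name, vals in columns.items() if pvariance(vals) > threshold]

def pearson(x, y):
    """Pearson correlation; returns 0.0 if either input is constant."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy) if sx and sy else 0.0

def rank_by_correlation(columns, target):
    """Rank features by absolute correlation with the target, strongest first."""
    scores = {name: abs(pearson(vals, target)) for name, vals in columns.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

columns = {
    "size": [50.0, 80.0, 120.0, 160.0, 200.0],
    "constant": [1.0, 1.0, 1.0, 1.0, 1.0],
    "noise": [3.0, 1.0, 4.0, 1.0, 5.0],
}
price = [100.0, 160.0, 240.0, 320.0, 400.0]
print(variance_filter(columns))                   # ['size', 'noise']
print(rank_by_correlation(columns, price)[0][0])  # size
```

scikit-learn's `VarianceThreshold` and `SelectKBest` (with scoring functions such as `mutual_info_regression` or `chi2`) implement the same ideas.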

Wrapper Methods

Wrapper methods evaluate subsets of features by training models and measuring performance.

Examples include:

- Forward selection

- Backward elimination

- Recursive feature elimination

These methods can find feature sets well suited to a particular model, but they require training many models and can overfit the validation data when many subsets are compared.
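A minimal sketch of forward selection. To keep it self-contained, the "model" is a 1-nearest-neighbour classifier scored by leave-one-out accuracy; that choice, and the toy data, are assumptions for illustration. Any model and validation scheme can be plugged in through the `score` parameter.

```python
def loo_1nn_accuracy(X, y, features):
    """Leave-one-out accuracy of a 1-nearest-neighbour classifier on the given feature indices."""
    correct = 0
    for i in range(len(X)):
        best_j, best_d = None, float("inf")
        for j in range(len(X)):
            if j == i:
                continue
            d = sum((X[i][f] - X[j][f]) ** 2 for f in features)
            if d < best_d:
                best_j, best_d = j, d
        if y[best_j] == y[i]:
            correct += 1
    return correct / len(X)

def forward_selection(X, y, score=loo_1nn_accuracy):
    """Greedily add the feature that most improves the score; stop when none helps."""
    selected, remaining, best_score = [], list(range(len(X[0]))), 0.0
    while remaining:
        candidate, cand_score = max(
            ((f, score(X, y, selected + [f])) for f in remaining), key=lambda t: t[1]
        )
        if cand_score <= best_score:
            break
        selected.append(candidate)
        remaining.remove(candidate)
        best_score = cand_score
    return selected, best_score

# Feature 0 separates the classes; feature 1 is noise on a larger scale.
X = [[0.0, 5.0], [0.1, 1.0], [0.2, 9.0], [1.0, 2.0], [1.1, 8.0], [1.2, 4.0]]
y = [0, 0, 0, 1, 1, 1]
print(forward_selection(X, y))  # ([0], 1.0)
```

Backward elimination runs the same loop in reverse, starting from all features and removing the least useful one each round. scikit-learn offers `SequentialFeatureSelector` and `RFE` for these searches.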

Embedded Methods

Embedded methods perform feature selection during model training.

Examples include:

- Lasso regression

- Decision tree feature importance

- Gradient boosting importance scores

These techniques integrate feature selection directly into the learning process.
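A minimal sketch of Lasso regression fitted by coordinate descent in plain Python, minimizing (1 / 2n) * ||y - Xw||^2 + alpha * ||w||_1, the same scaling scikit-learn's `Lasso` uses. Features and target are mean-centred so no intercept is fitted explicitly. The L1 penalty drives the coefficient of an irrelevant feature exactly to zero, which is what makes Lasso a selection method. The toy data are constructed so that the second feature carries no information about `y`.

```python
def soft_threshold(r, a):
    """Shrink r toward zero by a; values within [-a, a] become exactly 0."""
    if r > a:
        return r - a
    if r < -a:
        return r + a
    return 0.0

def lasso(X, y, alpha=0.1, n_iter=200):
    """Coordinate-descent Lasso on mean-centred data; returns the coefficient list."""
    n, p = len(X), len(X[0])
    x_means = [sum(row[j] for row in X) / n for j in range(p)]
    y_mean = sum(y) / n
    Xc = [[row[j] - x_means[j] for j in range(p)] for row in X]
    residual = [v - y_mean for v in y]  # y - Xw with w = 0
    w = [0.0] * p
    for _ in range(n_iter):
        for j in range(p):
            col = [row[j] for row in Xc]
            z = sum(c * c for c in col) / n
            if z == 0:
                continue
            # Correlation of feature j with the residual, adding back its own contribution.
            rho = sum(c * (r + w[j] * c) for c, r in zip(col, residual)) / n
            new_w = soft_threshold(rho, alpha) / z
            # Keep the residual consistent with the updated coefficient.
            residual = [r - (new_w - w[j]) * c for r, c in zip(residual, col)]
            w[j] = new_w
    return w

X = [[1.0, 1.0], [2.0, -1.0], [3.0, 0.0], [4.0, 0.0], [5.0, -1.0], [6.0, 1.0]]
y = [3.0, 6.0, 9.0, 12.0, 15.0, 18.0]  # y = 3 * feature 0; feature 1 is irrelevant
print(lasso(X, y, alpha=0.1))
```

The first coefficient comes out slightly below 3 because the penalty shrinks it, while the second is exactly 0.0. Larger `alpha` values remove more features; with a large enough value every coefficient becomes zero.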

Avoiding Common Feature Engineering Mistakes

Despite its importance, feature engineering can introduce errors if not performed carefully.

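One common mistake is data leakage, where features are built using information that would not be available at prediction time, such as statistics computed from the test dataset.

For example, using information recorded after the event being predicted can lead to unrealistically high validation performance that disappears once the model is deployed.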

Another mistake is creating too many features without considering relevance, which may increase noise and lead to overfitting.

Feature engineering should focus on meaningful transformations rather than excessive feature generation.
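One way to avoid leakage in time-ordered data is to compute every feature "as of" the event's timestamp and to split training and test sets by time rather than at random. The sketch below assumes hypothetical `(user_id, timestamp)` events. A leaky alternative would be each user's total event count over the whole dataset, which silently includes events that happen after the prediction time.

```python
from bisect import bisect_left
from collections import defaultdict

def prior_event_counts(events):
    """For each (user, timestamp) event, count that user's events strictly before it."""
    times_by_user = defaultdict(list)
    for user, ts in events:
        times_by_user[user].append(ts)
    for times in times_by_user.values():
        times.sort()
    # bisect_left returns how many earlier timestamps precede ts, i.e. past events only.
    return [bisect_left(times_by_user[user], ts) for user, ts in events]

def temporal_split(rows, cutoff, timestamp):
    """Train on rows before the cutoff and test on rows at or after it (no shuffling)."""
    train = [r for r in rows if timestamp(r) < cutoff]
    test = [r for r in rows if timestamp(r) >= cutoff]
    return train, test

events = [("a", 10), ("a", 5), ("b", 7), ("a", 20)]
print(prior_event_counts(events))  # [1, 0, 0, 2]
print(temporal_split(events, 10, timestamp=lambda e: e[1]))
```

The same principle applies to scalers and encoders: fit them on the training period and apply them, unchanged, to later data.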

Automation in Feature Engineering

Modern machine learning platforms increasingly support automated feature engineering.

AutoML systems can automatically generate and evaluate features using algorithms designed to identify useful transformations.

Tools such as feature stores also help manage reusable features across different machine learning models.

However, human insight and domain expertise remain essential for creating meaningful features.

Real-World Example

Consider an e-commerce system designed to predict whether a customer will make a purchase.

Raw data may include:

- User browsing history

- Time spent on product pages

- Past purchase history

- Product categories viewed

Feature engineering might produce new variables such as:

- Average session duration

- Number of product views per visit

- Time since last purchase

- Ratio of purchases to visits

These engineered features capture customer behavior patterns more effectively than raw data alone.
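A minimal sketch of turning one customer's session log into the engineered features listed above. The `Session` record and its fields are hypothetical. Only sessions that ended before the prediction time `now` are used, so the features do not leak future behavior.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List

@dataclass
class Session:
    start: float        # unix timestamp, seconds
    end: float          # unix timestamp, seconds
    product_views: int
    purchased: bool

def customer_features(sessions: List[Session], now: float) -> dict:
    """Behavioural features for one customer from sessions that ended by `now`."""
    past = [s for s in sessions if s.end <= now]
    if not past:
        return {"avg_session_minutes": None, "views_per_visit": None,
                "hours_since_last_purchase": None, "purchase_to_visit_ratio": None}
    purchase_times = [s.end for s in past if s.purchased]
    return {
        "avg_session_minutes": mean((s.end - s.start) / 60 for s in past),
        "views_per_visit": mean(s.product_views for s in past),
        "hours_since_last_purchase": (
            (now - max(purchase_times)) / 3600 if purchase_times else None
        ),
        "purchase_to_visit_ratio": len(purchase_times) / len(past),
    }

sessions = [
    Session(start=0, end=600, product_views=4, purchased=False),
    Session(start=3_600, end=5_400, product_views=8, purchased=True),
    Session(start=86_400, end=87_000, product_views=3, purchased=False),
]
feats = customer_features(sessions, now=90_000)
print(feats)
```

Here the customer averages about 16.7 minutes and 5 product views per visit, last purchased 23.5 hours before `now`, and bought something in one of three visits.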

Best Practices for Feature Engineering

Successful feature engineering follows several best practices.

First, always begin with exploratory data analysis to understand relationships within the dataset.

Second, use domain knowledge to design meaningful features.

Third, avoid unnecessary complexity and focus on features that improve model performance.

Fourth, validate the contribution of each feature with cross-validation rather than a single train/test split.

Finally, document feature transformations to ensure reproducibility in production systems.
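A minimal sketch of using k-fold cross-validation to compare candidate features. The one-feature least-squares model and the toy data are assumptions chosen to keep the example self-contained. The model is fitted on each training fold and scored on the held-out fold, so a feature is judged on data it was not fitted to.

```python
from statistics import mean

def k_fold_indices(n_samples, k=5):
    """Yield (train_indices, validation_indices) for k contiguous folds.

    Shuffle rows beforehand if their order is arbitrary; for time-ordered
    data, use a forward-in-time split instead.
    """
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, val
        start += size

def fit_line(xs, ys):
    """Ordinary least squares for a single feature: returns (slope, intercept)."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((a - mx) ** 2 for a in xs)
    slope = sum((a - mx) * (b - my) for a, b in zip(xs, ys)) / sxx if sxx else 0.0
    return slope, my - slope * mx

def cv_mae(x, y, k=3):
    """Mean absolute error of a one-feature linear model, averaged over k folds."""
    errors = []
    for train, val in k_fold_indices(len(x), k):
        # Fit on the training fold only, then score on the held-out fold.
        slope, intercept = fit_line([x[i] for i in train], [y[i] for i in train])
        errors.append(mean(abs(y[i] - (slope * x[i] + intercept)) for i in val))
    return mean(errors)

y = [3.0, 5.0, 7.0, 9.0, 11.0, 13.0]
useful = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]  # y = 2 * useful + 1
noisy = [3.0, 1.0, 4.0, 1.0, 5.0, 9.0]   # unrelated to y
print(cv_mae(useful, y), cv_mae(noisy, y))
```

The informative feature yields a cross-validated error of essentially zero, while the unrelated one yields a large error, which is the kind of evidence that should decide whether a feature stays.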

Conclusion

Feature engineering is a fundamental component of successful machine learning systems. By transforming raw data into meaningful and informative features, practitioners enable algorithms to learn patterns more effectively.

Effective feature engineering involves creating new variables, transforming existing ones, and selecting the most relevant features for modeling. Techniques such as mathematical transformations, aggregation, interaction features, and dimensionality reduction help reveal hidden patterns in data.

Although modern machine learning frameworks increasingly automate feature extraction, domain expertise and careful feature design remain critical to achieving high-performing models. Organizations that prioritize thoughtful feature engineering often achieve significant improvements in model accuracy, reliability, and interpretability.