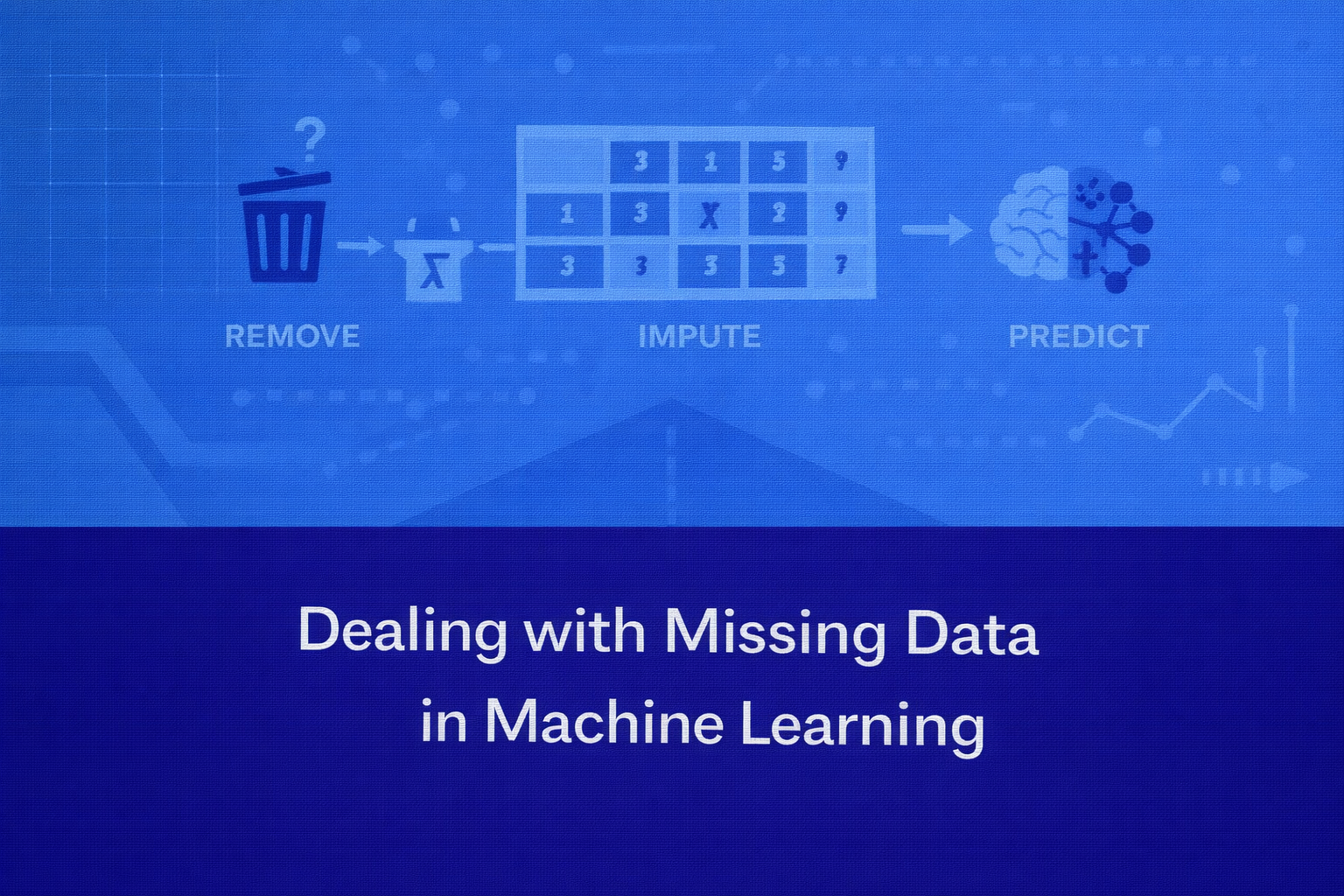

Imputation Methods and Strategies for Handling Incomplete Datasets

Abstract

Real-world datasets are rarely complete. Missing values occur frequently due to errors in data collection, sensor failures, incomplete surveys, system migrations, or data corruption. If not handled properly, missing data can significantly affect machine learning models by introducing bias, reducing statistical power, and degrading model performance. Handling missing data is therefore a critical step in data preprocessing. This article explains the causes and types of missing data and discusses various strategies for addressing them, including deletion techniques and imputation methods such as mean, median, mode, and model-based imputation. It also highlights best practices for integrating missing data handling into machine learning pipelines.

Introduction

Machine learning models rely on high-quality data to learn meaningful patterns. However, real-world data often contains missing or incomplete values.

For example, in a customer dataset:

- Some customers may not report their income.

- Sensor readings may fail during certain time periods.

- Survey participants may skip specific questions.

These missing values create gaps in the dataset that machine learning algorithms may not handle effectively.

Many algorithms require complete datasets and may fail or produce unreliable predictions when missing values are present. Even algorithms that tolerate missing values may experience reduced performance if missing data is not handled carefully.

Therefore, dealing with missing data is an essential preprocessing step before model training.

Why Missing Data Is a Problem

Missing values can affect machine learning models in several ways.

First, they reduce the amount of available information for training models.

Second, missing data can introduce bias if the missing values follow a systematic pattern.

Third, some algorithms cannot process datasets with missing entries and require complete numerical input.

Finally, missing values may distort statistical distributions used in feature engineering and model training.

Proper handling of missing data ensures that machine learning models remain accurate and reliable.

Types of Missing Data

Understanding why data is missing helps determine the best strategy for handling it.

Missing data is typically classified into three categories.

Missing Completely at Random (MCAR)

Data is considered missing completely at random when the probability of a value being missing is unrelated to both the observed data and the missing value itself.

For example, a sensor may randomly fail to record a measurement due to temporary hardware malfunction.

In this case, the observed records remain a representative sample: the missing values reduce the sample size but do not introduce systematic bias.

Missing at Random (MAR)

Missing at random occurs when the missingness depends on other observed variables but not on the missing value itself.

For example, respondents in certain occupations or with certain education levels may be less likely to report their income in a survey. In this case, the missingness of income depends on other recorded characteristics such as occupation or education level, not on the income value itself.

Missing Not at Random (MNAR)

Missing not at random occurs when the missingness is related to the value itself.

For instance, individuals with extremely high or low income may deliberately avoid reporting their income.

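Handling MNAR data is more complex because the missingness depends on values that were never observed, so the resulting bias cannot be corrected from the observed data alone and usually requires domain knowledge or explicit assumptions about the missingness mechanism.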

Understanding these categories helps determine appropriate strategies for handling missing data.
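
The three mechanisms can be compared with a small simulation. The sketch below is illustrative only: the population (income driven by years of education), the missingness rates, and the 35,000 cutoff are all hypothetical values chosen for the demonstration.

```python
import random
from statistics import mean

random.seed(0)  # fixed seed so the sketch is reproducible

# Hypothetical population: income depends on years of education plus noise.
n = 20_000
education = [random.randint(8, 20) for _ in range(n)]
income = [2_000 * e + random.gauss(0, 5_000) for e in education]

def observed_mean(values, is_missing):
    """Mean of the values that survive a given missingness mask."""
    return mean(v for v, m in zip(values, is_missing) if not m)

# MCAR: every value has the same 30% chance of being missing.
mcar = [random.random() < 0.3 for _ in range(n)]

# MAR: missingness depends only on the observed education column.
mar = [random.random() < (0.5 if e >= 16 else 0.1) for e in education]

# MNAR: missingness depends on the unobserved income value itself.
mnar = [random.random() < (0.6 if y > 35_000 else 0.1) for y in income]

true_mean = mean(income)
mean_mcar = observed_mean(income, mcar)
mean_mar = observed_mean(income, mar)
mean_mnar = observed_mean(income, mnar)

print(f"true mean:          {true_mean:,.0f}")
print(f"observed (MCAR):    {mean_mcar:,.0f}")
print(f"observed (MAR):     {mean_mar:,.0f}")
print(f"observed (MNAR):    {mean_mnar:,.0f}")
```

In this setup the MCAR mean stays close to the true mean, while the MAR and MNAR means are pulled downward. The difference is that the MAR bias can in principle be corrected using the recorded education column, whereas the MNAR bias cannot be corrected from the observed data alone.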

Basic Strategies for Handling Missing Data

Several approaches exist for dealing with missing values. These methods generally fall into two categories:

- Removing missing data

- Imputing missing data

The choice depends on the dataset size, the proportion of missing values, and the importance of the affected features.

Deletion Methods

Listwise Deletion

Listwise deletion removes any rows containing missing values.

For example, if a dataset has missing values in certain rows, those rows are completely removed from the dataset.

This method is simple and easy to implement.

Advantages include:

- Simplicity

- No need to estimate the missing values

However, listwise deletion may lead to significant data loss if many records contain missing values, and it yields unbiased results only when the data is missing completely at random.

It is typically suitable only when the percentage of missing data is very small.
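
A minimal sketch of listwise deletion, assuming records are stored as dictionaries with `None` marking a missing value. The column names and values are hypothetical.

```python
rows = [
    {"age": 34, "income": 52_000, "city": "Lyon"},
    {"age": None, "income": 48_000, "city": "Turin"},
    {"age": 45, "income": None, "city": "Porto"},
    {"age": 29, "income": 39_000, "city": None},
    {"age": 51, "income": 61_000, "city": "Ghent"},
]

def listwise_delete(records):
    """Keep only the records in which every field is present."""
    return [r for r in records if all(v is not None for v in r.values())]

complete = listwise_delete(rows)
retained = len(complete) / len(rows)
print(f"kept {len(complete)} of {len(rows)} rows ({retained:.0%})")
```

Each column here is only 20 percent missing, yet 60 percent of the rows are discarded because the gaps fall in different records. This is why the method tends to be costly on wide datasets.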

Column Removal

If a feature contains a very large number of missing values, it may be removed entirely from the dataset.

For example, if a column contains more than 70 percent missing values, it may not provide enough useful information for modeling.

Removing such features simplifies the dataset and prevents unnecessary complexity.

However, important information may be lost if the removed feature contains valuable predictive signals.
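
Column removal can be sketched as a threshold on the fraction of missing entries per column. The 0.7 threshold mirrors the 70 percent example above and is a rule of thumb, not a fixed standard. The helper names and data are hypothetical.

```python
rows = [
    {"age": 34, "income": 52_000, "fax": None},
    {"age": None, "income": 48_000, "fax": None},
    {"age": 45, "income": 57_000, "fax": "555-0100"},
    {"age": 29, "income": None, "fax": None},
    {"age": 51, "income": 61_000, "fax": None},
]

def missing_fraction(records, column):
    """Share of records in which the given column is missing."""
    return sum(r[column] is None for r in records) / len(records)

def drop_sparse_columns(records, threshold=0.7):
    """Remove every column whose missing fraction exceeds the threshold."""
    columns = list(records[0].keys())
    keep = [c for c in columns if missing_fraction(records, c) <= threshold]
    return [{c: r[c] for c in keep} for r in records]

cleaned = drop_sparse_columns(rows)
print(list(cleaned[0].keys()))
```

Before dropping a column, it can be worth checking whether the fact that a value is missing is itself predictive of the target.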

Imputation Methods

Imputation refers to the process of replacing missing values with estimated values.

This allows models to use the dataset without losing observations.

Several imputation methods are commonly used in machine learning.

Mean Imputation

Mean imputation replaces missing numerical values with the average value of the feature.

For example, if the average age in a dataset is 35 and some entries are missing, the missing values may be replaced with 35.

Advantages of mean imputation include:

- Simplicity

- Fast implementation

- Preservation of dataset size

However, mean imputation shrinks the variance of the feature, because every imputed entry sits exactly at the center, and it weakens the correlations between that feature and the others.
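
The following sketch applies mean imputation to a small, hypothetical list of ages (with `None` marking missing entries) and compares the variance before and after.

```python
from statistics import mean, pvariance

ages = [22, 35, None, 41, None, 29, 48]

observed = [a for a in ages if a is not None]
fill = mean(observed)  # average of the observed ages, 35 for this data

imputed = [fill if a is None else a for a in ages]

print("fill value:", fill)
print("variance of observed values:", pvariance(observed))
print("variance after imputation:  ", round(pvariance(imputed), 2))
```

The dataset size is preserved, but the variance drops because the two imputed entries add no spread of their own.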

Median Imputation

Median imputation replaces missing values with the median value of the feature.

This method is often preferred when the data contains outliers.

For example, income data often contains extreme values. In such cases, the median provides a more robust estimate than the mean.

Median imputation preserves central tendencies while reducing the influence of outliers.
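
To illustrate the effect of outliers, the sketch below computes both candidate fill values for a hypothetical income list that contains one extreme value.

```python
from statistics import mean, median

incomes = [32_000, 41_000, None, 38_000, 45_000, 2_500_000, None, 36_000]

observed = [v for v in incomes if v is not None]
mean_fill = mean(observed)      # dragged upward by the single extreme income
median_fill = median(observed)  # stays near the typical income

imputed = [median_fill if v is None else v for v in incomes]

print(f"mean fill:   {mean_fill:,.0f}")
print(f"median fill: {median_fill:,.0f}")
```

With this data the mean is more than ten times larger than the median, so mean imputation would assign an implausibly high income to every record with a missing value.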

Mode Imputation

Mode imputation is used for categorical variables.

It replaces missing values with the most frequently occurring category.

For example, if a feature representing marital status contains missing values, the most common category may be used as a replacement.

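This method is simple, but it inflates the frequency of the most common category and can introduce bias, especially when many values are missing or when the missing entries belong disproportionately to less common categories.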
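
A minimal sketch of mode imputation on a hypothetical marital status column, which also shows how the share of the most common category changes after filling.

```python
from collections import Counter

status = ["married", "single", None, "married", "divorced", None, "married", "single"]

observed = [s for s in status if s is not None]
most_common = Counter(observed).most_common(1)[0][0]

imputed = [most_common if s is None else s for s in status]

share_before = Counter(observed)[most_common] / len(observed)
share_after = Counter(imputed)[most_common] / len(imputed)

print("fill value:", most_common)
print(f"share of '{most_common}' before: {share_before:.1%}, after: {share_after:.1%}")
```

The dominant category grows from half of the observed values to well over half of the completed column, which is the distortion to watch for when the proportion of missing values is large.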

K-Nearest Neighbor Imputation

K-nearest neighbor (KNN) imputation estimates missing values based on similar data points.

The algorithm identifies the nearest neighbors of a data point with missing values and uses their values to estimate the missing entry.

For example, if a customer’s income is missing, the algorithm may estimate it using the income values of similar customers with comparable attributes.

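KNN imputation can produce more accurate estimates than simple statistical methods, but it is sensitive to feature scaling and to the choice of k, and the neighbor search requires additional computational resources on large datasets.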
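
The idea can be sketched in a few lines, assuming Euclidean distance over two fully observed numeric features and an unweighted average of the k nearest donors. The `knn_impute` helper and the customer data are hypothetical. A real pipeline would normally scale the features first.

```python
import math

customers = [
    {"age": 25, "tenure": 1, "income": 30_000},
    {"age": 27, "tenure": 2, "income": 34_000},
    {"age": 45, "tenure": 15, "income": 80_000},
    {"age": 47, "tenure": 18, "income": 90_000},
    {"age": 26, "tenure": 2, "income": None},
]

def knn_impute(records, target, features, k=2):
    """Fill missing `target` values with the mean of the k nearest donors."""
    donors = [r for r in records if r[target] is not None]
    result = []
    for r in records:
        if r[target] is not None:
            result.append(dict(r))
            continue
        point = [r[f] for f in features]
        # Rank donors by Euclidean distance in feature space.
        nearest = sorted(
            donors, key=lambda d: math.dist(point, [d[f] for f in features])
        )[:k]
        estimate = sum(d[target] for d in nearest) / len(nearest)
        result.append({**r, target: estimate})
    return result

filled = knn_impute(customers, target="income", features=["age", "tenure"])
print(filled[-1])
```

The customer with the missing income is closest to the two younger, short-tenure customers, so the estimate is the average of their incomes rather than the overall mean.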

Regression-Based Imputation

Regression imputation predicts missing values using other variables in the dataset.

For example, missing income values may be predicted using variables such as education level, job title, and location.

A regression model is trained on complete cases and then used to estimate missing values.

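This approach captures relationships between variables but may introduce bias if the model is not accurate. Because every imputed value lies exactly on the fitted regression line, it also understates the natural variability of the data unless random noise is added to the predictions.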
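
As a minimal sketch, the example below fits a one-variable least-squares line on the complete cases and uses it to fill the gaps. It assumes a single hypothetical predictor (years of education) and a linear relationship. With several predictors, a multiple regression would take its place.

```python
from statistics import mean

# (years_of_education, income); income is None where it is missing.
data = [(10, 31_000), (12, 35_000), (14, 43_000), (16, 47_000), (18, None), (11, None)]

# Step 1: fit ordinary least squares on the complete cases only.
complete = [(x, y) for x, y in data if y is not None]
x_bar = mean(x for x, _ in complete)
y_bar = mean(y for _, y in complete)
slope = (
    sum((x - x_bar) * (y - y_bar) for x, y in complete)
    / sum((x - x_bar) ** 2 for x, _ in complete)
)
intercept = y_bar - slope * x_bar

# Step 2: predict income wherever it is missing.
imputed = [(x, intercept + slope * x if y is None else y) for x, y in data]

print(f"income = {intercept:.0f} + {slope:.0f} * education")
print(imputed[-2:])
```

Observed incomes are left untouched, and only the two missing entries receive predicted values.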

Multiple Imputation

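Multiple imputation generates several plausible values for each missing entry, producing several completed datasets. The analysis is run on each completed dataset separately, and the results are then pooled into a final estimate.

Because the spread between the completed datasets reflects the uncertainty in the imputation process, this method produces more statistically reliable results, including more honest standard errors, than filling each gap with a single value.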

Multiple imputation is commonly used in research studies and complex statistical analyses.

However, it requires more computational effort than simpler methods.
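
The sketch below illustrates the workflow on a hypothetical education and income dataset: it creates m completed datasets by adding a randomly drawn residual to each regression prediction, estimates the mean income in each, and pools the results with Rubin's rules. It is a simplification. A full implementation would also redraw the regression coefficients for every imputation, and the value m = 20 is an arbitrary choice.

```python
import random
from statistics import mean, variance

random.seed(42)  # fixed seed so the sketch is reproducible

# (years_of_education, income); income is None where it is missing.
data = [(10, 31_000), (12, 35_000), (14, 43_000), (16, 47_000),
        (11, 33_000), (15, 44_000), (18, None), (13, None), (17, None)]

# Fit a regression line on the complete cases and keep its residuals.
complete = [(x, y) for x, y in data if y is not None]
x_bar = mean(x for x, _ in complete)
y_bar = mean(y for _, y in complete)
slope = (
    sum((x - x_bar) * (y - y_bar) for x, y in complete)
    / sum((x - x_bar) ** 2 for x, _ in complete)
)
intercept = y_bar - slope * x_bar
residuals = [y - (intercept + slope * x) for x, y in complete]

def impute_once():
    """One completed income column: prediction plus a random observed residual."""
    return [
        y if y is not None else intercept + slope * x + random.choice(residuals)
        for x, y in data
    ]

m = 20
estimates, within = [], []
for _ in range(m):
    incomes = impute_once()
    estimates.append(mean(incomes))                 # analysis on one dataset
    within.append(variance(incomes) / len(incomes)) # squared standard error

# Rubin's rules: average the estimates and combine both variance sources.
pooled = mean(estimates)
between = variance(estimates)
total_variance = mean(within) + (1 + 1 / m) * between

print(f"pooled mean income: {pooled:,.0f}")
print(f"pooled standard error: {total_variance ** 0.5:,.0f}")
```

The between-imputation term is what single imputation ignores: it widens the standard error to reflect the fact that the filled-in values are estimates, not observations.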

Using Machine Learning for Imputation

Advanced techniques use machine learning models to estimate missing values.

Algorithms such as random forests and gradient boosting can be trained to predict missing entries based on patterns in the dataset.

These approaches often produce better estimates because they capture nonlinear relationships between variables.

However, they require additional computational resources and careful validation.

Choosing the Right Strategy

The choice of missing data strategy depends on several factors.

Important considerations include:

- Percentage of missing values

- Importance of the feature

- Data distribution

- Computational resources

- Model requirements

For example:

- Small amounts of missing data may be handled using simple imputation.

- Large missing sections may require advanced imputation techniques.

- Features with excessive missing values may be removed.

There is no universal solution, and the appropriate method depends on the specific dataset.

Best Practices for Handling Missing Data

Several best practices help ensure reliable handling of missing values.

First, always analyze the pattern of missing data before applying imputation.

Second, avoid blindly replacing missing values without understanding their causes.

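Third, fit the imputation step (for example, the mean or the regression model) on the training dataset only, then apply the fitted values unchanged to the validation and test sets to prevent data leakage.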

Fourth, compare candidate strategies by evaluating model performance on held-out data, rather than assuming that a more sophisticated imputation method will perform better.

Finally, document preprocessing steps to maintain reproducibility in machine learning pipelines.
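
The leakage point can be made concrete with a small fit-then-transform sketch. The `MedianImputer` class and the blood pressure readings are hypothetical. The pattern matters more than the specific statistic: the fill value is learned from the training split and reused as-is on the test split.

```python
from statistics import median

class MedianImputer:
    """Learns one fill value from training data and reuses it everywhere."""

    def fit(self, values):
        # Only the values passed here influence the fill value.
        self.fill_ = median(v for v in values if v is not None)
        return self

    def transform(self, values):
        return [self.fill_ if v is None else v for v in values]

train = [120, None, 135, 128, None, 142]
test = [None, 180, 175]

imputer = MedianImputer().fit(train)      # fit on the training split only
train_filled = imputer.transform(train)
test_filled = imputer.transform(test)     # reuse the training median

print("fill value learned from train:", imputer.fill_)
print("test after imputation:", test_filled)
```

Computing the median over the combined train and test values would let information from the test set influence preprocessing, which tends to make evaluation results look better than they are.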

Real-World Example

Consider a healthcare dataset used to predict patient readmission.

Features may include:

- patient age

- blood pressure

- cholesterol level

- medication history

If cholesterol values are missing for some patients, the dataset may be incomplete.

Possible strategies include:

- imputing missing values using median cholesterol levels

- predicting missing values using regression models

- removing records if missing data is minimal

Selecting the appropriate method ensures that the model learns from as much reliable data as possible.

Conclusion

Missing data is a common challenge in real-world machine learning datasets. Ignoring missing values can lead to inaccurate models, biased predictions, and reduced analytical reliability.

Techniques such as deletion, statistical imputation, and model-based imputation provide effective ways to address missing data while preserving valuable information. Methods such as mean, median, mode, KNN, and regression imputation allow datasets to remain usable without discarding large portions of data.

Successfully handling missing data requires understanding the nature of the missing values, selecting appropriate imputation methods, and carefully integrating these techniques into the machine learning pipeline. By applying thoughtful preprocessing strategies, machine learning practitioners can ensure that incomplete datasets still produce robust and reliable predictive models.