Scaling Techniques for Preparing Data for Algorithms Sensitive to Feature Magnitudes

Abstract

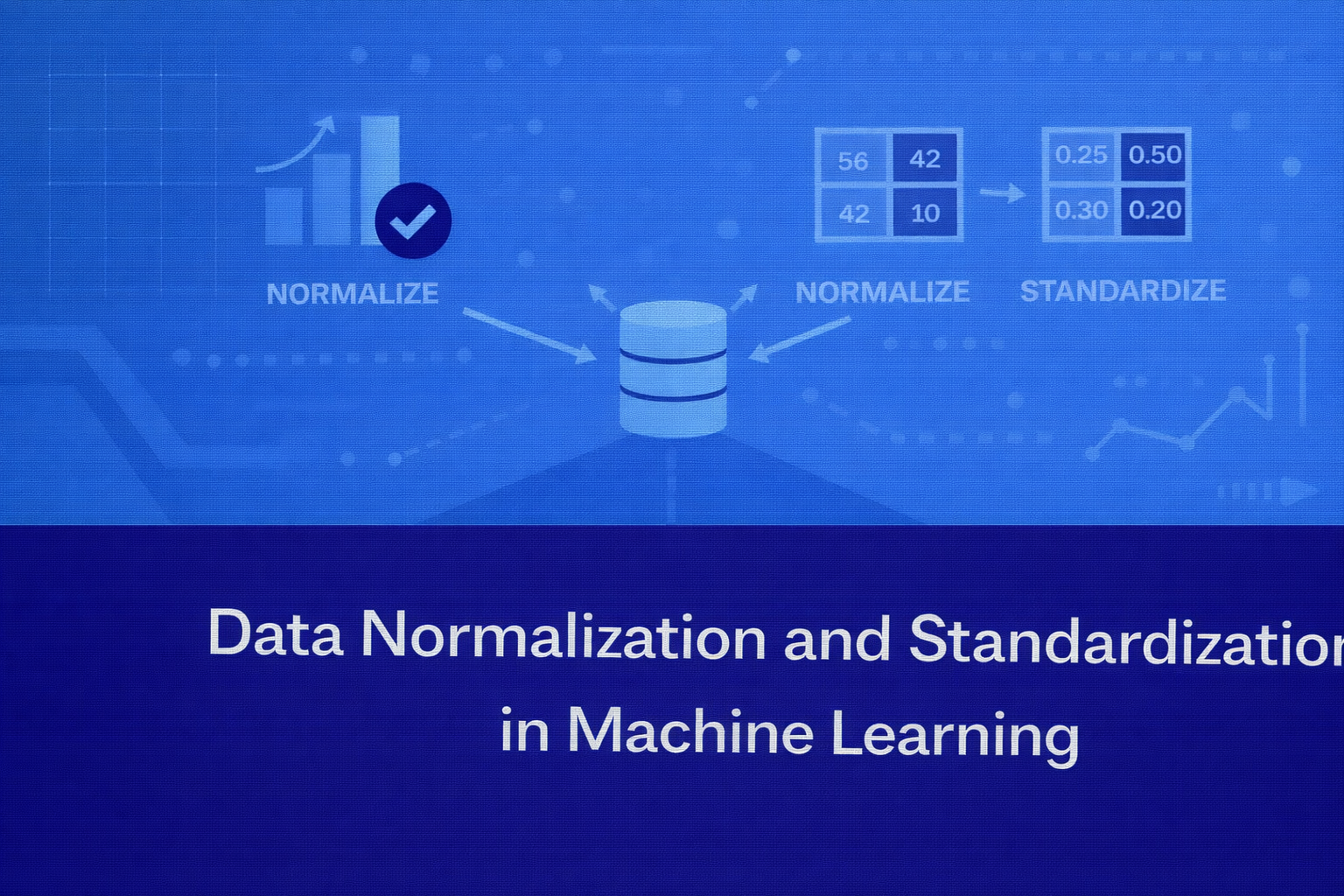

Data preprocessing is a critical step in the machine learning workflow, and feature scaling plays a key role in ensuring that models learn effectively from data. Many machine learning algorithms are sensitive to the magnitude of input features, meaning that features with larger numerical values can dominate those with smaller values. Data normalization and standardization are two widely used techniques that scale numerical features into comparable ranges, allowing algorithms to learn more efficiently. This article provides a detailed explanation of normalization and standardization, their mathematical foundations, their role in machine learning pipelines, and best practices for applying these techniques in real-world applications.

Introduction

Machine learning algorithms learn patterns from numerical features. However, real-world datasets often contain variables that exist on vastly different scales.

For example, consider a dataset containing the following features:

- Age: values ranging from 18 to 70

- Annual income: values ranging from 20,000 to 150,000

- Number of purchases: values ranging from 1 to 50

These differences in magnitude can cause problems for many machine learning algorithms because features with larger numeric values may disproportionately influence the learning process.

To address this issue, data preprocessing techniques such as normalization and standardization are used to scale features to a consistent range.

Feature scaling helps machine learning algorithms:

- converge faster during training

- avoid bias toward large-magnitude variables

- improve numerical stability

- produce more reliable predictions

Understanding when and how to apply normalization and standardization is an essential skill for machine learning practitioners.

Why Feature Scaling Is Important

Many machine learning algorithms rely on distance calculations or gradient optimization. These algorithms assume that input features are comparable in scale.

If one feature has much larger values than another, the model may assign disproportionate importance to that feature.

Algorithms particularly sensitive to feature scaling include:

- k-nearest neighbors (KNN)

- support vector machines (SVM)

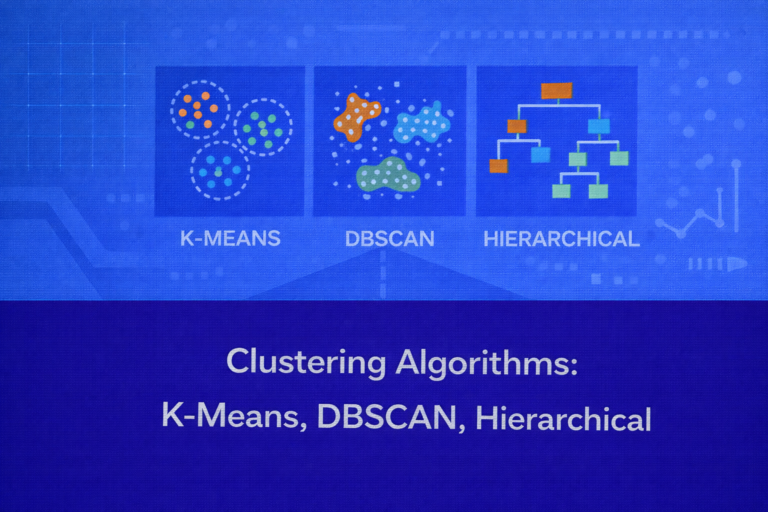

- k-means clustering

- gradient descent–based models

- neural networks

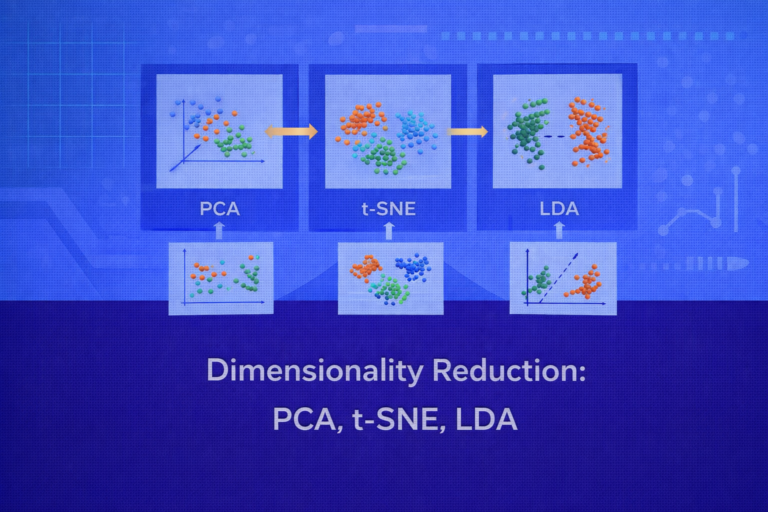

- principal component analysis (PCA)

For example, in KNN, distance between data points is calculated using metrics such as Euclidean distance. If one feature has a much larger numeric range, it will dominate the distance calculation.

Feature scaling puts all features on comparable scales, so that no feature dominates the model simply because of the units it is measured in.
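The effect on a distance calculation can be sketched with a few lines of Python. The three customers below are hypothetical, and the age and income ranges are borrowed from the introduction; this is an illustration under those assumptions, not a benchmark.

```python
import math

def euclidean(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

# Hypothetical customers described as (age, annual income).
alice = (25, 50_000)
bob = (60, 50_500)    # very different age, almost the same income
carol = (26, 52_000)  # almost the same age, slightly different income

# On raw values, the income difference decides everything.
raw_ab = euclidean(alice, bob)
raw_ac = euclidean(alice, carol)

def scale(person):
    """Min-max scale using the ranges from the introduction (18-70, 20,000-150,000)."""
    age, income = person
    return ((age - 18) / (70 - 18), (income - 20_000) / (150_000 - 20_000))

# After scaling, the age difference is visible again.
scaled_ab = euclidean(scale(alice), scale(bob))
scaled_ac = euclidean(scale(alice), scale(carol))

print(f"raw:    alice-bob = {raw_ab:.1f}, alice-carol = {raw_ac:.1f}")
print(f"scaled: alice-bob = {scaled_ab:.3f}, alice-carol = {scaled_ac:.3f}")
```

On raw values Bob appears closer to Alice than Carol does, even though he is 35 years older. After scaling, Carol becomes the nearer neighbor.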

Data Normalization

Definition

Normalization is a scaling technique that transforms numerical values into a fixed range, typically between 0 and 1.

This process ensures that all features share the same scale regardless of their original magnitudes.

Mathematical Formula

Normalization using Min-Max scaling is calculated as:

Normalized Value = (X − X_min) / (X_max − X_min)

Where:

- X is the original value

- X_min is the minimum value of the feature

- X_max is the maximum value of the feature

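This transformation maps the values used to compute X_min and X_max into the interval between 0 and 1. Values seen later that fall outside that original range will map below 0 or above 1.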

Example

Suppose a dataset contains ages ranging from 20 to 60.

If a value of 40 is normalized:

Normalized Value = (40 − 20) / (60 − 20)

Normalized Value = 20 / 40

Normalized Value = 0.5

Thus, the age value of 40 becomes 0.5 after normalization.
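The same calculation can be written as a small helper. This is a minimal sketch in plain Python; the function name `min_max_scale` and the choice to return zeros for a constant feature are assumptions made for illustration.

```python
def min_max_scale(values):
    """Rescale a list of numbers to the range [0, 1] with Min-Max scaling."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant feature has no range; returning zeros is an arbitrary choice here.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

ages = [20, 30, 40, 50, 60]
scaled_ages = min_max_scale(ages)
print(scaled_ages)  # [0.0, 0.25, 0.5, 0.75, 1.0]
```

The age of 40 lands at 0.5, matching the worked example.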

Advantages of Normalization

Normalization provides several benefits:

- Ensures all features share the same scale

- Prevents features with larger ranges from dominating the model

- Improves performance of distance-based algorithms

- Helps neural networks converge faster

Normalization is particularly useful when the data does not follow a normal distribution or when values must stay within a bounded range.

Limitations of Normalization

Normalization is sensitive to outliers. If a dataset contains extremely large or small values, the entire scale may be distorted.

For example, if income values include an outlier such as one extremely high salary, most normalized values may become compressed near zero.

Because of this limitation, normalization is not always ideal for datasets with significant outliers.
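The compression effect is easy to reproduce. The income figures below are hypothetical and chosen only to make the distortion visible; the helper repeats the Min-Max formula from this section.

```python
def min_max_scale(values):
    """Min-Max scaling to [0, 1]; assumes the values are not all identical."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]

# Hypothetical incomes, first without and then with one extreme salary.
incomes = [30_000, 45_000, 60_000, 75_000, 90_000]
with_outlier = incomes + [5_000_000]

scaled_plain = min_max_scale(incomes)
scaled_outlier = min_max_scale(with_outlier)

print("without outlier:", [round(v, 3) for v in scaled_plain])
print("with outlier:   ", [round(v, 3) for v in scaled_outlier])
```

Without the outlier the five incomes spread evenly across 0 to 1. With it, the same five incomes are squeezed into roughly the bottom 1% of the range, and only the outlier sits at 1.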

Data Standardization

Definition

Standardization transforms data so that it has a mean of zero and a standard deviation of one.

This scaling method converts values into a distribution centered around zero.

Standardization is commonly used when algorithms assume normally distributed input features.

Mathematical Formula

Standardization uses the Z-score formula:

Standardized Value = (X − μ) / σ

Where:

- X is the original value

- μ is the mean of the feature

- σ is the standard deviation of the feature

This transformation measures how far a value lies from the mean in terms of standard deviations.

Example

Suppose the mean income of a dataset is 50,000 and the standard deviation is 10,000.

If an individual earns 70,000:

Standardized Value = (70,000 − 50,000) / 10,000

Standardized Value = 2

This means the income is two standard deviations above the mean.
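A short sketch of the Z-score calculation follows, using only the Python standard library. The function names are illustrative, and the use of the population standard deviation (`statistics.pstdev`) rather than the sample standard deviation is an assumption; either can be used as long as it is applied consistently.

```python
import statistics

def z_score(x, mu, sigma):
    """Standardize a single value given a known mean and standard deviation."""
    return (x - mu) / sigma

def standardize(values):
    """Standardize a whole feature using its own mean and population std."""
    mu = statistics.fmean(values)
    sigma = statistics.pstdev(values)  # assumption: population std
    if sigma == 0:
        return [0.0 for _ in values]   # constant feature: arbitrary choice
    return [(v - mu) / sigma for v in values]

# The worked example from the text.
print(z_score(70_000, 50_000, 10_000))  # 2.0

# A hypothetical three-value feature.
standardized = standardize([40_000, 50_000, 60_000])
print([round(v, 3) for v in standardized])
```

After `standardize`, the feature has a mean of zero and a standard deviation of one, up to floating-point rounding.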

Advantages of Standardization

Standardization offers several benefits:

- Works well with normally distributed data

- Less sensitive to outliers than normalization

- Suitable for many machine learning algorithms

- Preserves the ordering and relative spacing of values, because it is a linear transformation

Because of these advantages, standardization is often the default scaling method in machine learning pipelines.

Limitations of Standardization

Although standardization reduces sensitivity to outliers, extreme values can still influence the mean and standard deviation.

Additionally, standardized values are not bounded within a fixed range, which may not be ideal for some applications.
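Both limitations can be seen in a small sketch. The measurements below are hypothetical: nine typical values and one extreme value. The population standard deviation is again an assumption.

```python
import statistics

# Hypothetical measurements: nine typical values and one extreme value.
values = [10, 12, 11, 13, 12, 11, 10, 13, 12, 200]

mu = statistics.fmean(values)
sigma = statistics.pstdev(values)  # assumption: population standard deviation
z_scores = [(v - mu) / sigma for v in values]

typical_z = z_scores[:-1]
outlier_z = z_scores[-1]

print(f"mean = {mu:.1f}, std = {sigma:.1f}")
print("typical z-scores:", round(min(typical_z), 2), "to", round(max(typical_z), 2))
print("outlier z-score: ", round(outlier_z, 2))
```

The single extreme value pulls the mean well above the typical values and inflates the standard deviation, so the nine typical values all receive negative, nearly identical scores. The outlier's own score lies far outside the 0 to 1 interval that normalization would guarantee.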

Normalization vs Standardization

Both normalization and standardization serve the purpose of scaling features, but they differ in their approach and use cases.

Normalization rescales data into a fixed range, usually between 0 and 1. Standardization shifts and rescales data so that it has mean zero and standard deviation one. It does not change the shape of the distribution, so skewed data remains skewed after standardization.

Normalization is often used when feature values must remain within a specific range, such as in neural networks that use activation functions sensitive to input magnitude.

Standardization is commonly preferred when data follows a normal distribution or when algorithms rely on statistical assumptions about feature distributions.

Choosing between these techniques depends on the nature of the dataset and the algorithm being used.

Scaling in Machine Learning Pipelines

Feature scaling should be applied during the preprocessing stage of the machine learning pipeline.

The typical process includes:

- Splitting the dataset into training and testing sets

- Calculating scaling parameters from the training dataset

- Applying the same transformation to both training and testing data

It is important that scaling parameters such as mean, standard deviation, minimum, and maximum values are calculated only on the training data.

Using the entire dataset during scaling may lead to data leakage, which can artificially inflate model performance.
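The split-then-fit order can be sketched as follows. `StandardScalerSketch` is a hypothetical minimal class written for this illustration, not a library API, and the data is randomly generated. Libraries such as scikit-learn expose a similar fit/transform pattern through their scaler classes.

```python
import random
import statistics

class StandardScalerSketch:
    """Hypothetical minimal scaler: learn parameters in fit(), reuse them in transform()."""

    def fit(self, values):
        self.mean_ = statistics.fmean(values)
        # Fall back to 1.0 for a constant feature to avoid division by zero.
        self.std_ = statistics.pstdev(values) or 1.0
        return self

    def transform(self, values):
        return [(v - self.mean_) / self.std_ for v in values]

# Synthetic income-like data, for illustration only.
random.seed(0)
data = [random.gauss(50_000, 10_000) for _ in range(100)]

# 1. Split first.
random.shuffle(data)
train, test = data[:80], data[80:]

# 2. Learn the scaling parameters from the training set only.
scaler = StandardScalerSketch().fit(train)

# 3. Apply the same transformation to both sets.
train_scaled = scaler.transform(train)
test_scaled = scaler.transform(test)

print(f"train mean after scaling: {statistics.fmean(train_scaled):.3f}")
print(f"test mean after scaling:  {statistics.fmean(test_scaled):.3f}")
```

The test set's mean after scaling is generally close to zero but not exactly zero. That is expected: the test data is scaled with the training parameters, exactly as unseen data would be in deployment.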

Modern machine learning frameworks provide built-in tools for scaling, allowing preprocessing steps to be integrated into automated pipelines.

Real-World Example

Consider a customer segmentation model using the following features:

- annual income

- number of transactions

- average purchase value

Income may range from thousands to hundreds of thousands, while transaction counts may be relatively small.

Without scaling, the model may focus primarily on income because it has the largest magnitude.

By applying normalization or standardization, all features are placed on a comparable scale, allowing the algorithm to identify clusters that reflect all three variables rather than income alone.
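As a rough illustration of this scenario, the sketch below standardizes each column of a tiny customer table. The four customers and their values are made up, and the population standard deviation is an assumption.

```python
import statistics

# Hypothetical customers: (annual income, number of transactions, average purchase value)
customers = [
    (35_000, 12, 40.0),
    (120_000, 5, 310.0),
    (58_000, 30, 25.0),
    (250_000, 8, 150.0),
]

def standardize(column):
    """Standardize one feature column with its own mean and population std."""
    mu = statistics.fmean(column)
    sigma = statistics.pstdev(column)
    return [(v - mu) / sigma for v in column]

# Work column by column: each feature gets its own mean and std.
columns = list(zip(*customers))
raw_spread = [statistics.pstdev(col) for col in columns]

scaled_columns = [standardize(col) for col in columns]
scaled_spread = [statistics.pstdev(col) for col in scaled_columns]

# Back to one row per customer, ready for a clustering algorithm.
scaled_rows = list(zip(*scaled_columns))

print("spread before scaling:", [round(s, 1) for s in raw_spread])
print("spread after scaling: ", [round(s, 1) for s in scaled_spread])
```

Before scaling, the spread of income is thousands of times larger than the spread of transaction counts. After scaling, every column has a spread of one, so a distance-based clustering method weighs the three features on equal footing.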

Best Practices for Feature Scaling

Several best practices should be followed when applying normalization and standardization.

First, always analyze the data distribution before selecting a scaling method.

Second, apply scaling only to numerical features, as categorical variables require different preprocessing methods.

Third, ensure that scaling parameters are learned from the training dataset and reused for test data.

Fourth, integrate scaling into the machine learning pipeline to maintain consistency during deployment.

Finally, monitor model performance to ensure that scaling improves training stability and prediction accuracy.

Conclusion

Data normalization and standardization are essential preprocessing techniques that ensure machine learning models learn effectively from numerical features. By scaling variables to comparable magnitudes, these methods prevent certain features from dominating the learning process and help algorithms converge more efficiently.

Normalization rescales data into a fixed range, making it useful for algorithms sensitive to bounded inputs. Standardization centers data around zero with a standard deviation of one, making it suitable for models that rely on statistical properties.

Choosing the appropriate scaling technique depends on the dataset characteristics and the machine learning algorithm being used. When applied correctly, feature scaling can improve the performance, stability, and reliability of scale-sensitive models in real-world machine learning systems.