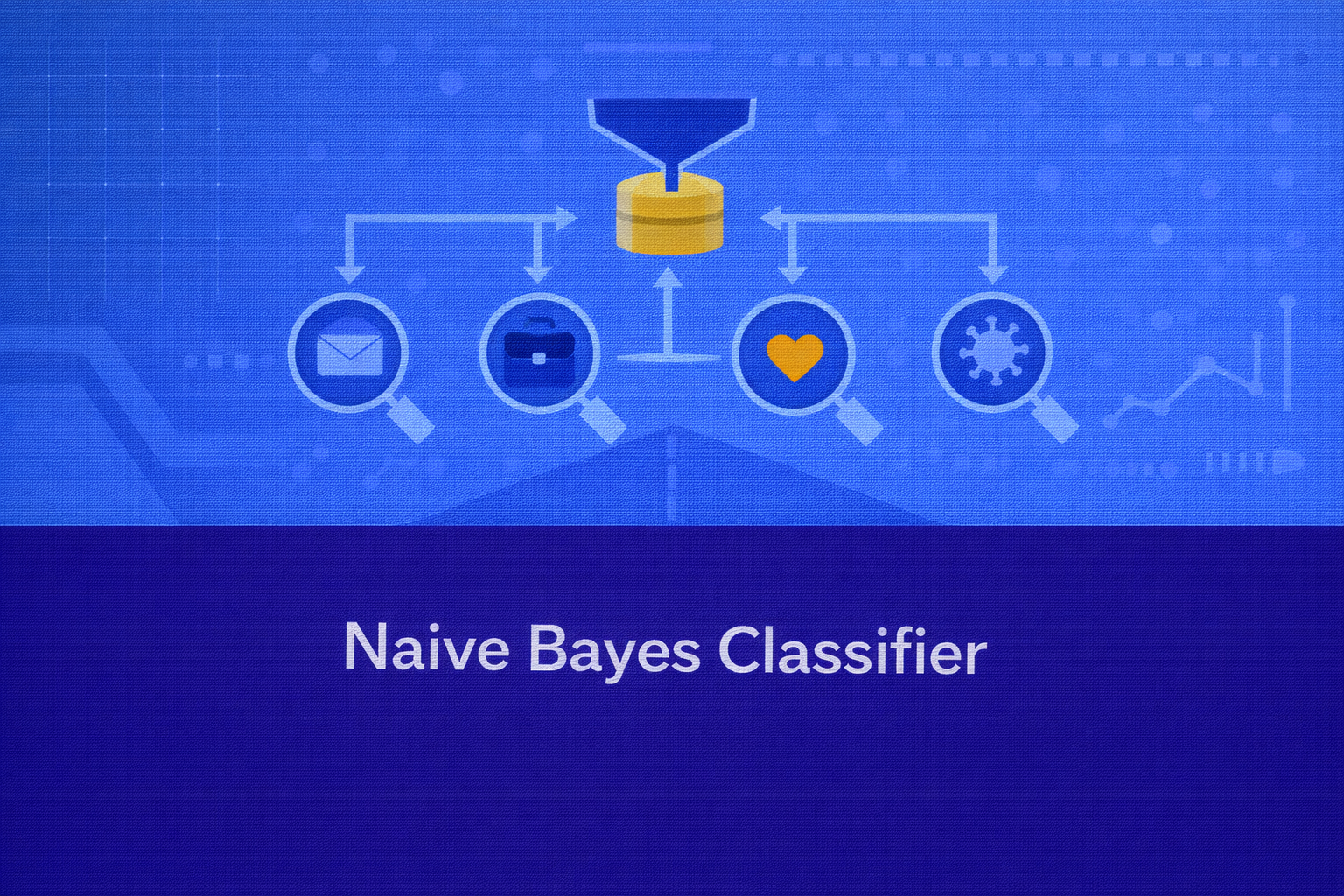

Naive Bayes is a family of probabilistic classifiers based on Bayes’ theorem and a strong conditional independence assumption among features. Despite the simplicity of that assumption, Naive Bayes remains one of the most effective, computationally efficient, and interpretable baseline classifiers in machine learning, especially for text categorization, spam filtering, document labeling, sentiment analysis, and other high-dimensional sparse data problems.

Abstract

This whitepaper presents a detailed technical explanation of the Naive Bayes classifier, covering Bayes’ theorem, probabilistic decision rules, the conditional independence assumption, parameter estimation, the main variants including Gaussian, Multinomial, Bernoulli, and Complement Naive Bayes, smoothing techniques, log-space computation, model evaluation, interpretability, strengths, limitations, and practical implementation details. All formulas are embedded inline in HTML-friendly format for direct use in WordPress or HTML editors.

1. Introduction

Classification problems require assigning an input feature vector $x = (x_1, x_2, \dots, x_p)$ to one of several possible class labels in a set $\mathcal{C} = \{c_1, c_2, \dots, c_K\}$. Naive Bayes approaches this by modeling the posterior probability $P(c \mid x)$ for each class and then choosing the class with the largest posterior value.

The classifier is called “Bayes” because it uses Bayes’ theorem, and “naive” because it assumes that once the class is known, the features become conditionally independent:

$$P(x_1, x_2, \dots, x_p \mid c) = \prod_{j=1}^{p} P(x_j \mid c).$$

This assumption is often unrealistic in the strict statistical sense, but surprisingly useful in practice.

2. Bayes’ Theorem

The mathematical foundation of Naive Bayes is Bayes’ theorem:

$$P(c \mid x) = \frac{P(x \mid c)\, P(c)}{P(x)}.$$

Here, $P(c \mid x)$ is the posterior probability of class $c$ given features $x$, $P(x \mid c)$ is the likelihood of observing features $x$ under class $c$, $P(c)$ is the prior probability of the class, and $P(x)$ is the evidence or marginal probability of the observation.

Since $P(x)$ is the same for all classes when comparing class scores for a single input, classification can be based on proportionality:

$$P(c \mid x) \propto P(x \mid c)\, P(c).$$

The prediction rule therefore becomes

$$\hat{y} = \arg\max_{c \in \mathcal{C}} P(x \mid c)\, P(c).$$
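As a small numeric illustration of the rule above, the following sketch applies Bayes’ theorem to a two-class problem. The class names, priors, and likelihood values are made-up numbers chosen only for illustration.

```python
# Hypothetical priors P(c) and likelihoods P(x | c) for one observed input x.
priors = {"spam": 0.3, "ham": 0.7}
likelihoods = {"spam": 0.02, "ham": 0.001}

# Unnormalized scores P(x | c) * P(c), one per class.
scores = {c: likelihoods[c] * priors[c] for c in priors}

# The evidence P(x) is the sum of the unnormalized scores over all classes.
evidence = sum(scores.values())

# Normalized posteriors P(c | x).
posteriors = {c: s / evidence for c, s in scores.items()}

# Dividing by P(x) rescales every score equally, so the argmax is unchanged.
prediction = max(scores, key=scores.get)
print(posteriors, prediction)
```

Although the prior favors "ham", the much larger likelihood under "spam" dominates, so the predicted class here is "spam".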

3. The Naive Conditional Independence Assumption

Directly estimating the full joint likelihood $P(x \mid c)$ can be extremely difficult because it requires modeling dependence across all features. Naive Bayes simplifies this by assuming conditional independence:

$$P(x \mid c) = P(x_1, x_2, \dots, x_p \mid c) = \prod_{j=1}^{p} P(x_j \mid c).$$

Substituting this into Bayes’ theorem yields the Naive Bayes decision rule:

$$P(c \mid x) \propto P(c) \prod_{j=1}^{p} P(x_j \mid c),$$

and the classifier predicts

$$\hat{y} = \arg\max_{c \in \mathcal{C}} P(c) \prod_{j=1}^{p} P(x_j \mid c).$$

This factorization dramatically reduces the parameter estimation burden. Instead of estimating a full joint distribution over $p$ variables, the model estimates one one-dimensional conditional distribution per feature per class.
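To make the size of that reduction concrete, the sketch below counts free parameters per class for the special case of binary features, which is an assumption made here purely for illustration. A full joint distribution over $p$ binary features has $2^p - 1$ free parameters, while the factorized model needs only $p$.

```python
def joint_params_per_class(p: int) -> int:
    """Free parameters of a full joint distribution over p binary features."""
    return 2 ** p - 1


def naive_params_per_class(p: int) -> int:
    """Free parameters under conditional independence: one Bernoulli per feature."""
    return p


for p in (5, 10, 20):
    print(p, joint_params_per_class(p), naive_params_per_class(p))
```

With 20 binary features the full joint already needs over a million parameters per class, whereas the naive factorization needs 20.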

4. Decision Rule in Log Space

Multiplying many small probabilities can produce numerical underflow. For this reason, practical Naive Bayes implementations compute scores in log space. Taking logarithms preserves the ranking because log is monotonic:

$$\log P(c \mid x) = \log P(c) + \sum_{j=1}^{p} \log P(x_j \mid c) - \log P(x).$$

Since $\log P(x)$ is constant across classes for a fixed input, the decision can be made using:

$$\hat{y} = \arg\max_{c \in \mathcal{C}} \left[ \log P(c) + \sum_{j=1}^{p} \log P(x_j \mid c) \right].$$

This form is both numerically stable and computationally convenient.
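The underflow problem is easy to reproduce. The sketch below uses a hypothetical input with 2000 features, each assigned a likelihood of 0.01 under some class, and compares the direct product with the log-space sum.

```python
import math

# Hypothetical setting: 2000 features, each with likelihood 0.01 under a class.
probs = [0.01] * 2000
prior = 0.5

# Direct product: the true value is about 1e-4000, far below the smallest
# positive double-precision number, so the running product collapses to zero.
product = prior
for q in probs:
    product *= q

# The log-space sum stays an ordinary finite number.
log_score = math.log(prior) + sum(math.log(q) for q in probs)

print(product)    # 0.0
print(log_score)  # a finite negative number
```

Once the product has collapsed to zero for every class, the classes can no longer be ranked, while the log-scores remain comparable.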

5. Parameter Estimation

Naive Bayes is a generative classifier: it models how the data is generated within each class and combines this with class priors. Training therefore consists of estimating:

- the prior probability for each class, $P(c)$

- the class-conditional feature probabilities or densities, $P(x_j \mid c)$

The empirical class prior is estimated as

$$P(c) = \frac{N_c}{N},$$

where $N_c$ is the number of training examples in class $c$ and $N$ is the total number of training examples.

The specific form of $P(x_j \mid c)$ depends on the chosen Naive Bayes variant and the type of data.
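The empirical prior is simply a normalized class count. A minimal sketch, with a hypothetical helper name `estimate_priors` and made-up training labels:

```python
from collections import Counter


def estimate_priors(labels):
    """Return the empirical prior N_c / N for each class in `labels`."""
    counts = Counter(labels)
    total = len(labels)
    return {c: n / total for c, n in counts.items()}


# Hypothetical training labels.
y_train = ["spam", "ham", "ham", "spam", "ham"]
priors = estimate_priors(y_train)
print(priors)
```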

6. Gaussian Naive Bayes

Gaussian Naive Bayes is used when features are continuous and assumed to be normally distributed within each class.

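For feature $x_j$ under class $c$, the likelihood is modeled as

$$P(x_j \mid c) = \frac{1}{\sqrt{2\pi\sigma_{jc}^2}} \exp\left(-\frac{(x_j - \mu_{jc})^2}{2\sigma_{jc}^2}\right).$$

Here, $\mu_{jc}$ is the mean of feature $j$ within class $c$, and $\sigma_{jc}^2$ is the corresponding variance.

These are estimated from training data as

$$\hat{\mu}_{jc} = \frac{1}{N_c} \sum_{i:\, y_i = c} x_{ij}$$

and

$$\hat{\sigma}_{jc}^2 = \frac{1}{N_c} \sum_{i:\, y_i = c} (x_{ij} - \hat{\mu}_{jc})^2.$$

The class score in log form becomes

$$\text{score}(c \mid x) = \log P(c) + \sum_{j=1}^{p} \left[ -\tfrac{1}{2} \log(2\pi\sigma_{jc}^2) - \frac{(x_j - \mu_{jc})^2}{2\sigma_{jc}^2} \right].$$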

Gaussian Naive Bayes is often surprisingly strong for continuous tabular data, though it can degrade when the Gaussian assumption is badly violated.
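The estimation and scoring formulas above can be sketched in plain Python as follows. The function names and the toy data are hypothetical, and the small `var_floor` term is an added safeguard against zero variance that is not part of the formulas above.

```python
import math
from collections import defaultdict


def fit_gaussian_nb(X, y, var_floor=1e-9):
    """Estimate the prior, per-feature means, and per-feature variances per class."""
    by_class = defaultdict(list)
    for row, label in zip(X, y):
        by_class[label].append(row)
    model = {}
    for c, rows in by_class.items():
        n_c = len(rows)
        means = [sum(col) / n_c for col in zip(*rows)]
        # Maximum-likelihood variance (divide by N_c), plus the assumed floor.
        variances = [
            sum((v - m) ** 2 for v in col) / n_c + var_floor
            for col, m in zip(zip(*rows), means)
        ]
        model[c] = (n_c / len(X), means, variances)
    return model


def gaussian_log_score(model, x, c):
    """log P(c) plus the sum of per-feature Gaussian log-densities."""
    prior, means, variances = model[c]
    score = math.log(prior)
    for xj, mu, var in zip(x, means, variances):
        score += -0.5 * math.log(2 * math.pi * var) - (xj - mu) ** 2 / (2 * var)
    return score


def predict(model, x):
    """Return the class with the largest log-score."""
    return max(model, key=lambda c: gaussian_log_score(model, x, c))


# Hypothetical two-feature training set with two well-separated classes.
X = [[1.0, 2.1], [1.2, 1.9], [0.8, 2.0], [3.0, 0.5], [3.2, 0.7], [2.9, 0.4]]
y = ["a", "a", "a", "b", "b", "b"]
model = fit_gaussian_nb(X, y)
print(predict(model, [1.1, 2.0]), predict(model, [3.1, 0.6]))
```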

7. Multinomial Naive Bayes

Multinomial Naive Bayes is widely used for count-based features, especially in document classification where each feature represents the number of times a term appears in a document. If $x_j$ is the count of term $j$, then within class $c$ the probability of that term is estimated as

$$\theta_{jc} = P(\text{term } j \mid c).$$

Without smoothing, the estimate is

$$\hat{\theta}_{jc} = \frac{T_{jc}}{\sum_{l=1}^{p} T_{lc}},$$

where $T_{jc}$ is the total count of term $j$ across all training documents in class $c$.

For a document vector $x = (x_1, \dots, x_p)$, the multinomial likelihood is proportional to

$$P(x \mid c) \propto \prod_{j=1}^{p} \theta_{jc}^{x_j}.$$

Thus the log-score becomes

$$\text{score}(c \mid x) = \log P(c) + \sum_{j=1}^{p} x_j \log \theta_{jc}.$$

This formulation makes Multinomial Naive Bayes extremely effective for bag-of-words, term-frequency, and sometimes TF-IDF-like representations.
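A minimal sketch of the multinomial log-score follows. The three-term vocabulary, the $\theta$ values, and the priors are invented for illustration; in practice they would come from smoothed training counts.

```python
import math


def multinomial_log_score(x, log_prior, log_theta):
    """log P(c) + sum_j x_j * log(theta_jc) for one class.

    Terms with a zero count contribute nothing, so they are skipped.
    """
    return log_prior + sum(xj * lt for xj, lt in zip(x, log_theta) if xj)


# Hypothetical 3-term vocabulary: ["free", "meeting", "offer"].
theta = {"spam": [0.5, 0.1, 0.4], "ham": [0.1, 0.8, 0.1]}
prior = {"spam": 0.4, "ham": 0.6}

doc = [2, 0, 1]  # "free" appears twice, "offer" once
scores = {
    c: multinomial_log_score(
        doc, math.log(prior[c]), [math.log(t) for t in theta[c]]
    )
    for c in theta
}
prediction = max(scores, key=scores.get)
print(scores, prediction)
```

Because only nonzero counts contribute, scoring a sparse document costs time proportional to the number of distinct terms it contains rather than to the vocabulary size.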

8. Bernoulli Naive Bayes

Bernoulli Naive Bayes is designed for binary features, where each feature indicates presence or absence. In text applications, this means the model only considers whether a word appears, not how many times it appears.

For each feature and class, we estimate

$$\theta_{jc} = P(x_j = 1 \mid c).$$

The likelihood for a binary vector becomes

$$P(x \mid c) = \prod_{j=1}^{p} \theta_{jc}^{x_j} (1 - \theta_{jc})^{1 - x_j}.$$

The corresponding log-score is

$$\text{score}(c \mid x) = \log P(c) + \sum_{j=1}^{p} \left[ x_j \log \theta_{jc} + (1 - x_j) \log(1 - \theta_{jc}) \right].$$

Bernoulli Naive Bayes can outperform Multinomial Naive Bayes when absence information is important, especially in short texts or binary event features.
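The role of absence information shows up directly in the score: every feature contributes a term, whether it is present or not. The sketch below uses made-up $\theta$ values and priors.

```python
import math


def bernoulli_log_score(x, log_prior, theta):
    """log P(c) + sum_j [x_j log(theta_jc) + (1 - x_j) log(1 - theta_jc)]."""
    score = log_prior
    for xj, t in zip(x, theta):
        # Present features add log(theta); absent features add log(1 - theta).
        score += math.log(t) if xj else math.log(1.0 - t)
    return score


# Hypothetical presence probabilities for three binary features.
theta = {"spam": [0.8, 0.1, 0.6], "ham": [0.1, 0.7, 0.2]}
prior = {"spam": 0.5, "ham": 0.5}

doc = [1, 0, 0]  # only the first feature is present
scores = {c: bernoulli_log_score(doc, math.log(prior[c]), theta[c]) for c in theta}
prediction = max(scores, key=scores.get)
print(scores, prediction)
```

Here the absence of the second feature, which is common in "ham", counts against "ham" even though the feature never appears in the document.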

9. Complement Naive Bayes

Complement Naive Bayes is a modified version of Multinomial Naive Bayes designed to be more robust in imbalanced text classification settings. Instead of estimating term weights from a class alone, it estimates them from the complement of that class. This reduces bias toward majority classes and can improve performance in skewed datasets.

Its formulation varies by implementation, but the core idea is to use statistics from all classes except $c$ when estimating the feature contribution for class $c$.
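One possible way to realize this idea is sketched below. It is an illustrative simplification, not a reference formulation: term probabilities are estimated from the pooled counts of all other classes with additive smoothing, and the class whose complement explains the document worst is chosen. Weight normalization and the class prior, which some implementations include, are omitted, and all names and counts are hypothetical.

```python
import math


def fit_complement_weights(term_counts, alpha=1.0):
    """term_counts maps class -> list of total term counts for that class.

    Returns class -> list of log complement probabilities, estimated from
    the counts of every class except c, with additive smoothing alpha.
    """
    classes = list(term_counts)
    p = len(next(iter(term_counts.values())))
    weights = {}
    for c in classes:
        comp = [
            sum(term_counts[o][j] for o in classes if o != c) for j in range(p)
        ]
        total = sum(comp) + alpha * p
        weights[c] = [math.log((t + alpha) / total) for t in comp]
    return weights


def complement_predict(x, weights):
    """Pick the class whose complement assigns the document the lowest log-likelihood."""
    return min(
        weights, key=lambda c: sum(xj * w for xj, w in zip(x, weights[c]))
    )


# Hypothetical per-class term counts over a 3-term vocabulary.
counts = {"sports": [30, 2, 1], "politics": [1, 25, 3], "tech": [2, 3, 40]}
weights = fit_complement_weights(counts)
prediction = complement_predict([3, 0, 1], weights)
print(prediction)
```

Because each class is scored using data pooled from all the other classes, a class with few training documents still gets estimates based on a large sample.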

10. Laplace and Lidstone Smoothing

A key practical issue in Naive Bayes is zero probability. If a term or feature value never occurs in a class during training, the estimated conditional probability becomes zero. Since Naive Bayes multiplies probabilities, one zero can wipe out the entire class score.

To avoid this, additive smoothing is used. In Multinomial Naive Bayes, Laplace smoothing estimates:

$$\hat{\theta}_{jc} = \frac{T_{jc} + 1}{\sum_{l=1}^{p} T_{lc} + p}.$$

More generally, Lidstone smoothing with parameter $\alpha > 0$ gives

$$\hat{\theta}_{jc} = \frac{T_{jc} + \alpha}{\sum_{l=1}^{p} T_{lc} + \alpha p}.$$

When $\alpha = 1$, this becomes standard Laplace smoothing.

For Bernoulli Naive Bayes, smoothing is similarly applied to binary occurrence probabilities:

$$\hat{\theta}_{jc} = \frac{N_{jc} + \alpha}{N_c + 2\alpha},$$

where $N_{jc}$ is the number of documents in class $c$ where feature $j$ is present.
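Both smoothed estimators are one-liners in code. In the sketch below the function names and the count values are hypothetical.

```python
def lidstone_multinomial(term_counts, alpha=1.0):
    """Smoothed theta_jc from the term counts T_jc of a single class."""
    p = len(term_counts)
    total = sum(term_counts)
    return [(t + alpha) / (total + alpha * p) for t in term_counts]


def lidstone_bernoulli(n_docs_with_feature, n_class_docs, alpha=1.0):
    """Smoothed P(x_j = 1 | c) from document counts N_jc and N_c."""
    return (n_docs_with_feature + alpha) / (n_class_docs + 2 * alpha)


# Hypothetical counts: the second term never occurs in this class.
counts = [3, 0, 7]
theta = lidstone_multinomial(counts, alpha=1.0)
print(theta)                      # every entry is strictly positive
print(lidstone_bernoulli(0, 8))   # unseen feature still gets nonzero probability
```

The unseen term receives probability $1/13$ instead of zero, and the smoothed estimates still sum to one across the vocabulary.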

11. Why Naive Bayes Works Despite Being “Naive”

The conditional independence assumption is often false in real-world data. Words in text are not independent, medical variables are not independent, and business indicators are not independent. Yet Naive Bayes often performs well because classification depends on correct ranking of class scores, not necessarily perfectly calibrated probabilities. Even when dependencies exist, the simplified likelihoods can still preserve a useful class ordering.

In high-dimensional sparse domains, especially text, the signal often comes from many weakly informative features. Naive Bayes can aggregate these efficiently, making it highly competitive despite model misspecification.

12. Probabilistic Interpretation

Naive Bayes is a generative model because it estimates $P(x \mid c)$ and $P(c)$, then infers $P(c \mid x)$. This differs from discriminative models such as logistic regression, which directly model $P(c \mid x)$.

The posterior can be normalized as

$$P(c \mid x) = \frac{P(c) \prod_{j=1}^{p} P(x_j \mid c)}{\sum_{c' \in \mathcal{C}} P(c') \prod_{j=1}^{p} P(x_j \mid c')}.$$

In many implementations, however, the denominator is only computed when actual posterior values are needed, not for simple class prediction.

13. Computational Complexity

Naive Bayes is computationally efficient. Let $N$ be the number of samples, $p$ the number of features, and $K$ the number of classes.

Training generally requires scanning the data once to estimate counts, means, variances, or frequencies, which is roughly linear in the dataset size, about $O(Np)$ for dense data.

Prediction for one sample usually requires computing a class score for each class, which is approximately $O(Kp)$. This makes Naive Bayes especially attractive for large-scale and real-time classification.

14. Numerical Stability

As already noted, multiplying many small probabilities can underflow to zero in floating-point arithmetic. This is why log probabilities are almost always used in practice. Instead of computing $P(c) \prod_{j} P(x_j \mid c)$, implementations compute $\log P(c) + \sum_{j} \log P(x_j \mid c)$.

If normalized posterior probabilities are required, one often applies the log-sum-exp trick to improve stability: if the class log-scores are $s_1, \dots, s_K$, then

$$P(c = k \mid x) = \frac{\exp(s_k - m)}{\sum_{r=1}^{K} \exp(s_r - m)},$$

where $m = \max(s_1, \dots, s_K)$.
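A short sketch of this normalization follows. The log-scores are hypothetical values of the magnitude that a long document can produce, and the function name is illustrative.

```python
import math


def posteriors_from_log_scores(log_scores):
    """Convert class log-scores to normalized posteriors via max-shifting."""
    m = max(log_scores)
    # After subtracting m the largest exponent is exactly 0, so exp() is safe.
    exps = [math.exp(s - m) for s in log_scores]
    z = sum(exps)
    return [e / z for e in exps]


# Hypothetical log-scores for three classes.
log_scores = [-9211.0, -9213.0, -9220.0]

print(math.exp(log_scores[0]))  # 0.0: exponentiating directly underflows
post = posteriors_from_log_scores(log_scores)
print(post)
```

Subtracting $m$ leaves the ratios between classes unchanged, since the common factor $e^{-m}$ cancels between numerator and denominator.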

15. Evaluation Metrics

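Since Naive Bayes is primarily a classifier, common evaluation metrics include accuracy, precision, recall, specificity, and F1-score. Writing TP, TN, FP, and FN for the counts of true positives, true negatives, false positives, and false negatives, these are defined as

$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN},$$

$$\text{Precision} = \frac{TP}{TP + FP},$$

$$\text{Recall} = \frac{TP}{TP + FN},$$

$$\text{Specificity} = \frac{TN}{TN + FP},$$

$$F_1 = \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}.$$

For probabilistic outputs, log loss may be used:

$$\text{LogLoss} = -\frac{1}{N} \sum_{i=1}^{N} \sum_{k=1}^{K} y_{ik} \log p_{ik},$$

where $y_{ik}$ is an indicator that example $i$ belongs to class $k$ and $p_{ik}$ is the predicted posterior probability of class $k$ for example $i$.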
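These definitions translate directly into code. The sketch below uses hypothetical confusion-matrix counts and predictions; the `eps` clipping in `log_loss` is an added assumption to avoid taking the log of zero, and the metric function does not guard against empty denominators.

```python
import math


def binary_metrics(tp, tn, fp, fn):
    """Standard binary classification metrics from confusion-matrix counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "specificity": tn / (tn + fp),
        "f1": 2 * precision * recall / (precision + recall),
    }


def log_loss(true_indices, probs, eps=1e-15):
    """Mean negative log-probability assigned to the true class.

    true_indices[i] is the index of the true class of example i, and
    probs[i][k] is the predicted probability of class k for example i.
    """
    total = 0.0
    for k, p in zip(true_indices, probs):
        total += -math.log(max(p[k], eps))
    return total / len(true_indices)


m = binary_metrics(tp=40, tn=45, fp=5, fn=10)
ll = log_loss([0, 1], [[0.9, 0.1], [0.2, 0.8]])
print(m, ll)
```

Because $y_{ik}$ is one only for the true class, the double sum in the log-loss formula reduces to a single log term per example, which is what the loop computes.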

16. Interpretability

Naive Bayes is one of the more interpretable probabilistic classifiers. The class prior $P(c)$ reveals the baseline prevalence of each class, and the likelihood terms $P(x_j \mid c)$ reveal how each feature behaves within each class.

In text classification, for example, terms with high $P(\text{term} \mid c)$ or strong log-likelihood contributions are interpretable indicators of the class. For a given input, the log-score decomposition $\log P(c) + \sum_{j} \log P(x_j \mid c)$ lets practitioners see which features contributed most to the final decision.
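One convenient way to read the decomposition is to compare the per-feature terms of two classes. The sketch below does this for a multinomial model with invented vocabulary and $\theta$ values; the helper name `contributions` is hypothetical.

```python
import math

# Hypothetical vocabulary and per-class term probabilities.
vocab = ["free", "meeting", "offer"]
theta = {"spam": [0.5, 0.1, 0.4], "ham": [0.1, 0.8, 0.1]}
doc = [2, 1, 1]  # term counts for one document


def contributions(x, theta_a, theta_b, names):
    """Per-term contribution to the log-odds of class a over class b,
    sorted by absolute magnitude (largest first)."""
    contrib = {
        name: xj * (math.log(ta) - math.log(tb))
        for name, xj, ta, tb in zip(names, x, theta_a, theta_b)
    }
    return sorted(contrib.items(), key=lambda kv: abs(kv[1]), reverse=True)


ranked = contributions(doc, theta["spam"], theta["ham"], vocab)
for name, value in ranked:
    print(f"{name:8s} {value:+.3f}")
```

Positive values push the decision toward the first class and negative values toward the second, so in this toy document "free" is the strongest evidence for "spam" and "meeting" is evidence against it.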

That said, the probability outputs can be poorly calibrated because the independence assumption may overstate confidence. So Naive Bayes is often better as a ranker or classifier than as a precise probability estimator.

17. Naive Bayes vs Logistic Regression

Naive Bayes is generative, while logistic regression is discriminative. Naive Bayes models $P(x \mid c)$ and $P(c)$, then derives $P(c \mid x)$. Logistic regression directly models $P(c \mid x)$.

In binary classification, logistic regression uses a functional form such as

$$P(y = 1 \mid x) = \frac{1}{1 + e^{-(\beta_0 + \beta^\top x)}},$$

while Naive Bayes computes posterior class scores from priors and feature-conditional likelihoods.

Naive Bayes often trains faster and can perform very well with limited data, especially in text domains. Logistic regression often achieves better-calibrated probabilities and can outperform Naive Bayes when the independence assumption is strongly violated and sufficient training data exists.

18. Naive Bayes vs Decision Trees and KNN

Compared with decision trees, Naive Bayes does not learn hierarchical rules such as “if $x_j < t$ then go left”. Compared with KNN, it does not rely on distance metrics such as $d(x, z) = \sqrt{\sum_{j} (x_j - z_j)^2}$. Instead, it computes class scores directly from probabilities.

This makes Naive Bayes much faster at inference than KNN and often more stable than shallow trees on sparse text data.

19. Advantages of Naive Bayes

- Extremely fast training and prediction.

- Works well on high-dimensional sparse data, especially text.

- Requires relatively little training data for a strong baseline.

- Simple probabilistic interpretation.

- Handles multiclass classification naturally.

- Scales well to large datasets.

20. Limitations of Naive Bayes

- The conditional independence assumption is often unrealistic.

- Predicted probabilities may be poorly calibrated and overconfident.

- Feature dependencies are ignored, which can reduce accuracy in some domains.

- Continuous features may not fit Gaussian assumptions well.

- Zero-frequency issues require smoothing.

21. Practical Use Cases

Naive Bayes is widely used in:

- spam filtering

- email routing

- document classification

- sentiment analysis

- language detection

- medical symptom-based screening

- fast baseline classification for tabular and sparse data

22. Best Practices

- Choose the Naive Bayes variant that matches the feature type.

- Use smoothing to avoid zero probabilities.

- Compute in log space for numerical stability.

- For text, carefully preprocess vocabulary, tokenization, and feature representation.

- Validate calibration if posterior probabilities are business-critical.

- Benchmark against logistic regression and linear SVM for text tasks.

23. Conclusion

Naive Bayes is one of the most elegant examples of probabilistic machine learning. It transforms Bayes’ theorem and a strong simplifying assumption into a practical, scalable classifier that often performs far better than its name suggests. Its strength lies not in perfect realism, but in computational efficiency, robustness in sparse domains, and the ability to aggregate many weak feature signals quickly.

For practitioners, Naive Bayes remains indispensable as both a teaching model and a practical baseline. It provides a transparent view into generative classification, sharpens understanding of priors and likelihoods, and remains a production-worthy choice in text and other high-dimensional settings where speed, simplicity, and interpretability matter.