Abstract

As Artificial Intelligence (AI) systems transition from research prototypes to production-grade platforms, scalability has become a critical engineering requirement. Modern AI applications—such as recommendation systems, predictive maintenance platforms, fraud detection engines, and large language models—must process massive datasets, serve millions of users, and continuously retrain models without degrading performance.

Scalability in AI systems refers to the ability of machine learning infrastructure, algorithms, and pipelines to efficiently handle increasing workloads, including larger datasets, more complex models, higher request volumes, and expanding computational requirements.

This paper explores the concept of scalability in AI systems, discusses its architectural dimensions, highlights common challenges, and presents practical strategies used in real-world machine learning platforms.

1. Introduction

Machine learning systems operate in environments where data volume, model complexity, and inference demand constantly grow. A model that works well on a laptop during development may fail when deployed in production environments handling terabytes of data or real-time inference requests.

For example:

| Scenario | Initial System | Scaled System |
|---|---|---|
| Fraud detection | 10K transactions/day | 10M transactions/day |
| Recommendation engine | 1K users | 50M users |
| Predictive maintenance | 100 sensors | 1M IoT sensors |
| NLP chatbot | 100 queries/day | 1M queries/day |

Without scalable architectures, AI systems experience:

- Slow model training

- High latency during predictions

- Infrastructure bottlenecks

- Increased operational costs

- Reduced system reliability

Therefore, scalability becomes a core design principle in AI system architecture.

2. Definition of Scalability in AI Systems

Scalability in AI systems refers to the ability of machine learning pipelines, models, and infrastructure to maintain performance and efficiency as the workload increases.

Workload growth may occur in several forms:

- Data Scalability: handling growing datasets

- Model Scalability: managing increasingly complex models

- Compute Scalability: efficient use of CPU, GPU, and distributed systems

- Inference Scalability: serving predictions to large numbers of users

- Pipeline Scalability: managing training, retraining, monitoring, and deployment at scale

A scalable AI system should be able to:

- Process larger datasets

- Train larger models

- Serve more predictions

- Maintain stable performance

- Optimize resource utilization

3. Dimensions of Scalability in AI Systems

Scalability in AI systems can be examined across several architectural dimensions.

3.1 Data Scalability

Modern machine learning relies heavily on large datasets. As datasets grow from gigabytes to petabytes, single-machine approaches that load the data into memory become impractical.

Challenges

- Data storage limitations

- Slow data processing pipelines

- Memory constraints

- Data ingestion bottlenecks

Strategies

Distributed Data Processing

Frameworks such as:

- Apache Spark

- Hadoop MapReduce

- Ray

- Dask

allow parallel processing of large datasets across clusters.

Data Partitioning

Large datasets are divided into smaller partitions that can be processed independently.
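The partitioning idea can be sketched in a few lines of Python. This is only an illustration under simple assumptions: the `partition`, `partial_sum`, and `parallel_sum` names are hypothetical, the "dataset" is a short in-memory list, and a thread pool stands in for the cluster workers that a framework such as Spark or Dask would provide.

```python
from concurrent.futures import ThreadPoolExecutor


def partition(records, num_partitions):
    """Split records into num_partitions contiguous, roughly equal chunks."""
    size, remainder = divmod(len(records), num_partitions)
    chunks, start = [], 0
    for i in range(num_partitions):
        # The first `remainder` chunks receive one extra record.
        end = start + size + (1 if i < remainder else 0)
        chunks.append(records[start:end])
        start = end
    return chunks


def partial_sum(chunk):
    """Per-partition work; here simply a sum over the chunk."""
    return sum(chunk)


def parallel_sum(records, num_partitions=4):
    """Process each partition independently, then combine the partial results."""
    chunks = partition(records, num_partitions)
    with ThreadPoolExecutor(max_workers=num_partitions) as pool:
        partials = list(pool.map(partial_sum, chunks))
    return sum(partials)


readings = list(range(1, 101))  # hypothetical sensor readings
total = parallel_sum(readings)
```

The key property is that `partial_sum` needs nothing outside its own chunk, which is what lets a distributed framework run the partitions on different machines and merge the results afterwards.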

Data Lakes

Storage platforms such as:

- Amazon S3

- Google Cloud Storage

- Azure Data Lake

enable scalable data storage.

3.2 Model Scalability

As models grow in size and complexity, computational requirements increase dramatically.

For example:

| Model | Approximate parameters |
|---|---|
| Logistic Regression | thousands |
| Random Forest | up to millions (tree nodes) |
| BERT-base | 110M |
| GPT-3 | 175B |

Large models require specialized techniques to scale training and inference.

Strategies

Model Parallelism

Different parts of a neural network are placed on different devices, and intermediate activations are passed from one device to the next.

Example:

Layer 1 → GPU 1

Layer 2 → GPU 2

Layer 3 → GPU 3
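The layer-to-device mapping above can be mimicked in plain Python. This is a toy simulation rather than real GPU code: the `Stage` class, the device labels, and the scalar weights are all hypothetical, and each "layer" is a one-parameter affine map followed by ReLU.

```python
class Stage:
    """One slice of the network, pinned to a single (simulated) device."""

    def __init__(self, device, weight, bias):
        self.device = device
        self.weight = weight
        self.bias = bias

    def forward(self, activations):
        # Affine transform followed by ReLU, applied element-wise.
        return [max(0.0, self.weight * a + self.bias) for a in activations]


def forward_pipeline(stages, inputs):
    """Run the stages in order, handing activations from device to device."""
    activations, visited = inputs, []
    for stage in stages:
        activations = stage.forward(activations)
        visited.append(stage.device)
    return activations, visited


stages = [
    Stage("gpu:1", weight=2.0, bias=0.0),   # Layer 1
    Stage("gpu:2", weight=1.0, bias=-1.0),  # Layer 2
    Stage("gpu:3", weight=0.5, bias=0.0),   # Layer 3
]
outputs, visited = forward_pipeline(stages, [1.0, 2.0])
```

In a real framework the hand-off between stages is a device-to-device tensor copy, and that communication cost is one reason layer boundaries are chosen carefully.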

Data Parallelism

The dataset is split across multiple GPUs or nodes. Each device trains a full copy of the model on its own shard, and the gradients are synchronized across devices after each step.
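A minimal sketch of one synchronous data-parallel training loop follows. It assumes a one-parameter model `y = w * x`, a tiny hypothetical dataset, and equal-sized shards; the gradient averaging plays the role that an all-reduce operation plays in a real distributed framework.

```python
def gradient(w, shard):
    """Gradient of mean squared error for the model y = w * x on one shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def data_parallel_step(w, shards, lr=0.01):
    """One synchronous step: local gradients, average, identical update."""
    local_grads = [gradient(w, shard) for shard in shards]  # one per device
    avg_grad = sum(local_grads) / len(local_grads)          # the all-reduce step
    return w - lr * avg_grad


# Hypothetical dataset generated from y = 3x, split evenly across two devices.
data = [(x, 3.0 * x) for x in [1.0, 2.0, 3.0, 4.0]]
shards = [data[:2], data[2:]]

w = 0.0
for _ in range(200):
    w = data_parallel_step(w, shards)
```

Because the shards are the same size, the averaged gradient equals the gradient computed on the full dataset, so every replica stays identical and `w` converges toward 3.0 just as it would on a single device.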

Parameter Sharding

Large model parameters are distributed across machines.
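One simple way to picture parameter sharding is as a placement problem: each parameter tensor is assigned to a worker so that memory use stays balanced. The sketch below uses a greedy heuristic with hypothetical tensor names and sizes. Production systems typically also split individual tensors across workers, which this simplification does not attempt.

```python
def shard_parameters(param_sizes, num_workers):
    """Greedily assign each parameter tensor to the least-loaded worker."""
    shards = [[] for _ in range(num_workers)]
    loads = [0] * num_workers
    # Place the largest tensors first so that the loads stay balanced.
    ordered = sorted(param_sizes.items(), key=lambda item: item[1], reverse=True)
    for name, size in ordered:
        target = loads.index(min(loads))
        shards[target].append(name)
        loads[target] += size
    return shards, loads


# Hypothetical tensor sizes, in millions of parameters.
param_sizes = {"embedding": 8, "attention": 4, "mlp": 4, "output": 2, "norm": 2}
shards, loads = shard_parameters(param_sizes, num_workers=2)
```

With these sizes each of the two workers ends up holding 10 million parameters, so neither needs to fit the full 20 million in memory.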

3.3 Compute Scalability

AI systems must efficiently utilize computational resources.

Compute scalability involves scaling:

- CPU clusters

- GPU clusters

- TPU infrastructure

- Distributed training environments

Horizontal Scaling

Add more machines.

Example:

1 server → 10 servers → 100 servers

Vertical Scaling

Increase resources of a single machine.

Example:

16 GB RAM → 256 GB RAM

1 GPU → 8 GPUs

Cloud platforms enable both approaches.

Examples:

- AWS SageMaker

- Google Vertex AI

- Azure ML

3.4 Inference Scalability

Once a model is deployed, it must serve predictions to users with low latency and high throughput.

Example:

| Application | Latency Requirement |
|---|---|
| Autonomous driving | <10 ms |
| Fraud detection | <100 ms |
| Recommendation systems | <200 ms |

Challenges

- High request volume

- Real-time predictions

- Resource bottlenecks

Strategies

Model Serving Infrastructure

Tools include:

- TensorFlow Serving

- TorchServe

- FastAPI (a general-purpose web framework, often used to wrap a model behind an HTTP endpoint)

- Triton Inference Server

Load Balancing

Requests are distributed across multiple servers.
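Round-robin is one of the simplest distribution policies and is enough to illustrate the idea. The sketch below is a toy in-process balancer, not a replacement for a real load balancer; the `RoundRobinBalancer` class and the server names are hypothetical.

```python
import itertools
from collections import Counter


class RoundRobinBalancer:
    """Hand each incoming request to the next server in a fixed rotation."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("at least one server is required")
        self._rotation = itertools.cycle(servers)

    def route(self, request_id):
        """Return the server chosen for this request."""
        return next(self._rotation)


# Hypothetical inference replicas.
balancer = RoundRobinBalancer(["inference-a", "inference-b", "inference-c"])
assignments = Counter(balancer.route(i) for i in range(9))
```

Nine requests over three replicas yield three requests each. Real balancers usually add health checks and may weight servers by current load instead of rotating blindly.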

Batch Inference

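Instead of scoring one request at a time, the server groups requests and runs the model once per batch. This improves hardware utilization at the cost of some added latency per request.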
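The grouping step can be sketched as follows. The `predict_batch` function is a stand-in for a real model call, and the batch size of 32 is an arbitrary assumption chosen for illustration.

```python
def predict_batch(features_batch):
    """Stand-in for a model: a single call scores the whole batch."""
    return [2.0 * x + 1.0 for x in features_batch]


def batched(items, batch_size):
    """Yield consecutive slices of at most batch_size items."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


def run_batch_inference(pending, batch_size=32):
    """Score all pending inputs, counting how many model calls were needed."""
    predictions, model_calls = [], 0
    for batch in batched(pending, batch_size):
        predictions.extend(predict_batch(batch))
        model_calls += 1
    return predictions, model_calls


pending = [float(i) for i in range(100)]  # hypothetical queued inputs
predictions, model_calls = run_batch_inference(pending, batch_size=32)
```

Here 100 inputs are served with 4 model calls instead of 100, which is where the throughput gain comes from on hardware that processes a batch in roughly the time of a single input.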

Caching

Predictions for frequently repeated inputs are cached so that the model is not re-run for identical requests, which reduces compute costs.
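For an in-process service, Python's standard `functools.lru_cache` is enough to demonstrate the effect. The `predict` function below is a hypothetical stand-in for an expensive model call, and the sketch assumes that the prediction depends only on its (hashable) arguments.

```python
from functools import lru_cache


@lru_cache(maxsize=1024)
def predict(user_id, item_id):
    """Stand-in for an expensive model call, keyed by its inputs."""
    return ((user_id * 31 + item_id) % 100) / 100.0


first = predict(1, 2)   # cache miss: the "model" runs
second = predict(1, 2)  # cache hit: the stored result is returned
other = predict(3, 4)   # different inputs, so another miss
info = predict.cache_info()
```

After these three calls, `info` reports one hit and two misses. Caching is only safe while the underlying features and model version stay the same, so a real deployment would also need an invalidation or expiry policy.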

3.5 Pipeline Scalability (MLOps)

Machine learning pipelines must support continuous retraining and deployment.

Typical pipeline stages include:

- Data ingestion

- Data validation

- Feature engineering

- Model training

- Model evaluation

- Model registration

- Deployment

- Monitoring

Scalable pipelines require automation tools such as:

- Kubeflow

- MLflow

- Airflow

- GitHub Actions

- Jenkins

This enables CI/CD for machine learning (MLOps).
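The stages listed above can be pictured as a chain of functions with a quality gate before deployment. The sketch below is deliberately tiny and makes several assumptions: the data is hard-coded, the "model" is a one-parameter least-squares fit, the error threshold is arbitrary, and evaluation reuses the training rows where a real pipeline would use held-out data.

```python
def ingest():
    """Data ingestion: hypothetical (x, y) rows, one with a missing value."""
    return [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2), (None, 1.0), (4.0, 8.1)]


def validate(rows):
    """Data validation: drop rows with missing fields."""
    return [(x, y) for x, y in rows if x is not None and y is not None]


def train(rows):
    """Model training: closed-form least squares for y = w * x."""
    return sum(x * y for x, y in rows) / sum(x * x for x, _ in rows)


def evaluate(w, rows):
    """Model evaluation: mean squared error."""
    return sum((w * x - y) ** 2 for x, y in rows) / len(rows)


def run_pipeline(max_mse=0.1):
    """Run every stage in order; deploy only if the model passes the gate."""
    rows = validate(ingest())
    w = train(rows)
    mse = evaluate(w, rows)
    return {"weight": w, "mse": mse, "deployed": mse <= max_mse}


result = run_pipeline()
```

An orchestrator such as Airflow or Kubeflow expresses the same dependency chain declaratively and adds scheduling, retries, and logging around each stage.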

4. Architectural Patterns for Scalable AI Systems

Several architectural patterns support scalability.

Microservices Architecture

AI components are deployed as independent services.

Example services:

- Feature service

- Model inference service

- Monitoring service

- Data pipeline service

This enables independent scaling.

Distributed Training

Large models are trained across clusters using frameworks such as:

- Horovod

- DeepSpeed

- PyTorch Distributed

- TensorFlow Distributed

Feature Stores

Feature stores allow scalable management of machine learning features.

Examples:

- Feast

- Tecton

- Vertex AI Feature Store

Benefits include:

- Feature reuse

- Real-time serving

- Consistency between training and inference
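The consistency benefit is easiest to see in code: when feature logic is registered once and both training and serving read from the same store, the two paths cannot drift apart. The class below is a hypothetical in-memory sketch and does not reflect the API of Feast, Tecton, or any other product.

```python
class InMemoryFeatureStore:
    """Minimal feature store: one registry shared by training and serving."""

    def __init__(self):
        self._definitions = {}  # feature name -> function(raw_record) -> value
        self._values = {}       # entity id -> {feature name: value}

    def register(self, name, fn):
        """Define a feature once, for every consumer."""
        self._definitions[name] = fn

    def ingest(self, entity_id, raw_record):
        """Compute every registered feature and store the results."""
        self._values[entity_id] = {
            name: fn(raw_record) for name, fn in self._definitions.items()
        }

    def get_features(self, entity_id, names):
        """Return stored feature values in the requested order."""
        row = self._values[entity_id]
        return [row[name] for name in names]


store = InMemoryFeatureStore()
store.register("avg_engine_temp", lambda r: sum(r["temps"]) / len(r["temps"]))
store.register("max_vibration", lambda r: max(r["vibration"]))
store.ingest("vehicle-42", {"temps": [88.0, 92.0, 90.0], "vibration": [0.2, 0.7, 0.4]})

feature_names = ["avg_engine_temp", "max_vibration"]
training_row = store.get_features("vehicle-42", feature_names)  # offline path
serving_row = store.get_features("vehicle-42", feature_names)   # online path
```

Both paths return the same vector because neither re-implements the feature logic.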

5. Real-World Example: Predictive Maintenance

Consider an AI-powered predictive maintenance system used in fleet management.

Initial Prototype

- Dataset: 50 MB

- Fleet: 20 vehicles

- Model: Random Forest

- Training time: 5 minutes

Production System

- Dataset: 10 TB

- Fleet: 500,000 vehicles

- Model: Deep neural network

- Training cluster: 200 GPUs

- Inference: millions of predictions/day

Scaling strategies include:

- Distributed sensor data ingestion

- Feature pipelines using Spark

- GPU-based distributed training

- Containerized inference services

- Auto-scaling cloud infrastructure
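To make the auto-scaling item concrete, the replica count for an inference service can be estimated from request volume. The sketch below is a back-of-the-envelope calculation: the daily volume, the per-replica capacity, the peak factor, and the minimum replica count are all hypothetical assumptions, not measurements from the system described.

```python
import math

SECONDS_PER_DAY = 86_400


def required_replicas(requests_per_day, per_replica_rps, peak_factor=3.0, min_replicas=2):
    """Estimate how many inference replicas are needed at peak load."""
    average_rps = requests_per_day / SECONDS_PER_DAY
    peak_rps = average_rps * peak_factor  # allow for traffic above the daily average
    return max(min_replicas, math.ceil(peak_rps / per_replica_rps))


# Hypothetical load: 5 million predictions per day, 50 requests/s per replica.
replicas = required_replicas(requests_per_day=5_000_000, per_replica_rps=50)
```

With these assumed numbers the average load is about 58 requests per second, the assumed peak is about 174, and four replicas are needed. An autoscaler evaluates a rule of this kind continuously against live metrics.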

6. Challenges in Scaling AI Systems

Despite advanced tools, scalability introduces several challenges.

Data Engineering Complexity

Managing massive datasets requires robust data pipelines.

Infrastructure Cost

Large-scale training can be expensive.

Example:

Training a large language model may cost millions of dollars.

Model Latency

Large models can slow down inference.

Monitoring and Reliability

AI systems must monitor:

- model drift

- prediction errors

- data distribution shifts
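One common way to quantify a data distribution shift is the Population Stability Index (PSI), which compares binned frequencies of a feature between a reference sample and current data. The sketch below is a minimal version under stated assumptions: equal-width bins taken from the reference range, a small `eps` to avoid division by zero, hypothetical data, and an alert threshold of 0.2 that should be tuned per feature.

```python
import math


def histogram(values, lo, hi, bins):
    """Fraction of values in each of `bins` equal-width buckets over [lo, hi]."""
    counts = [0] * bins
    width = (hi - lo) / bins
    for v in values:
        index = int((v - lo) / width)
        index = min(max(index, 0), bins - 1)  # clamp out-of-range values
        counts[index] += 1
    return [c / len(values) for c in counts]


def population_stability_index(reference, current, bins=10, eps=1e-6):
    """PSI = sum over bins of (current - reference) * ln(current / reference)."""
    lo, hi = min(reference), max(reference)
    if hi <= lo:
        raise ValueError("reference sample must contain at least two distinct values")
    ref = histogram(reference, lo, hi, bins)
    cur = histogram(current, lo, hi, bins)
    return sum((c - r) * math.log((c + eps) / (r + eps)) for r, c in zip(ref, cur))


reference = [i / 100 for i in range(100)]      # hypothetical training-time feature
shifted = [0.5 + i / 200 for i in range(100)]  # hypothetical production feature

psi_same = population_stability_index(reference, reference)
psi_shifted = population_stability_index(reference, shifted)
drift_detected = psi_shifted > 0.2  # assumed alert threshold
```

Identical samples give a PSI of zero, while the shifted sample, which leaves the lower half of the reference range empty, produces a large value and triggers the alert.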

7. Best Practices for Building Scalable AI Systems

Successful AI systems follow several design principles.

7.1 Start Simple

Begin with smaller models before scaling.

7.2 Use Distributed Processing

Adopt distributed frameworks early.

7.3 Automate ML Pipelines

Implement CI/CD pipelines for ML.

7.4 Monitor Model Performance

Track accuracy, latency, and data drift.

7.5 Optimize Resource Usage

Use GPU acceleration and model compression techniques.

8. Future Trends in AI Scalability

Several emerging technologies are improving scalability.

Model Compression

Techniques such as:

- pruning

- quantization

- knowledge distillation

reduce model size.
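Of the three techniques, quantization is the easiest to show in a few lines. The sketch below applies symmetric 8-bit quantization to a short list of weights; the weight values are hypothetical, and real toolchains typically quantize per tensor or per channel with calibration data rather than a single global scale.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer codes."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]  # hypothetical float32 weights
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each weight now fits in one byte instead of four, and the rounding error per weight is bounded by half the scale factor.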

Serverless ML

Serverless inference platforms add and remove capacity automatically as request volume changes, so teams do not provision servers in advance.

Hardware Acceleration

Specialized hardware such as:

- GPUs

- TPUs

- AI accelerators

improves scalability.

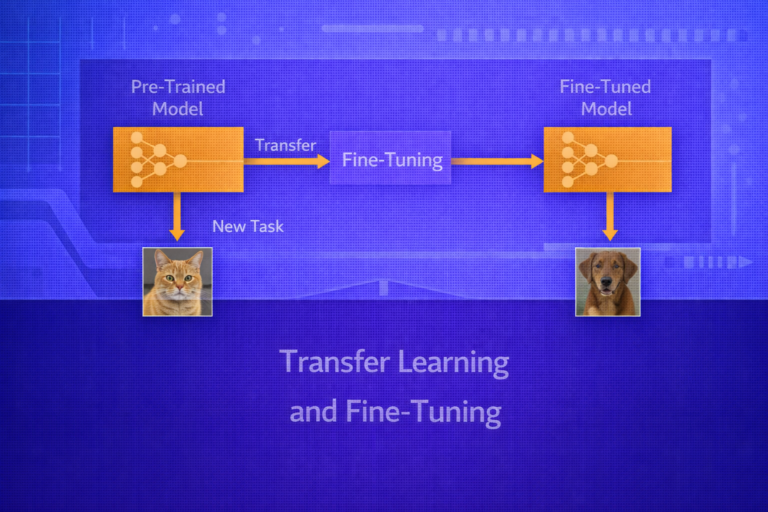

Foundation Models

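Large pretrained foundation models can be adapted to many downstream tasks. The cost of pretraining is paid once, and each task then needs only comparatively inexpensive fine-tuning, which reduces training costs overall.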

Conclusion

Scalability is a foundational requirement for modern AI systems. As machine learning applications grow in complexity and adoption, organizations must design architectures capable of handling massive datasets, complex models, and high-volume inference workloads.

Achieving scalability requires a combination of distributed data processing, scalable model training techniques, efficient inference infrastructure, and automated MLOps pipelines. By adopting these strategies, organizations can deploy AI systems that remain efficient, reliable, and cost-effective even as their workloads expand.

In the future, advances in hardware acceleration, distributed computing, and model optimization techniques will continue to improve the scalability of AI platforms, enabling the development of increasingly powerful and intelligent systems.